Updated: 7 Nov 2025

Form Number: LP1944

PDF size: 19 pages, 794 KB

Abstract

The NVIDIA H200 Tensor Core GPU supercharges generative AI and high-performance computing (HPC) workloads with game-changing performance and memory capabilities.

This product guide provides essential presales information to understand the NVIDIA H200 GPU and its key features, specifications, and compatibility. This guide is intended for technical specialists, sales specialists, sales engineers, IT architects, and other IT professionals who want to learn more about the GPU and consider its use in IT solutions.

Change History

Changes in the November 7, 2025 update:

- Added power cable information - Auxiliary power cables section

Introduction

The NVIDIA H200 Tensor Core GPU supercharges generative AI and high-performance computing (HPC) workloads with game-changing performance and memory capabilities. H200 is the newest addition to NVIDIA’s leading AI and high-performance data center GPU portfolio, bringing massive compute to data centers.

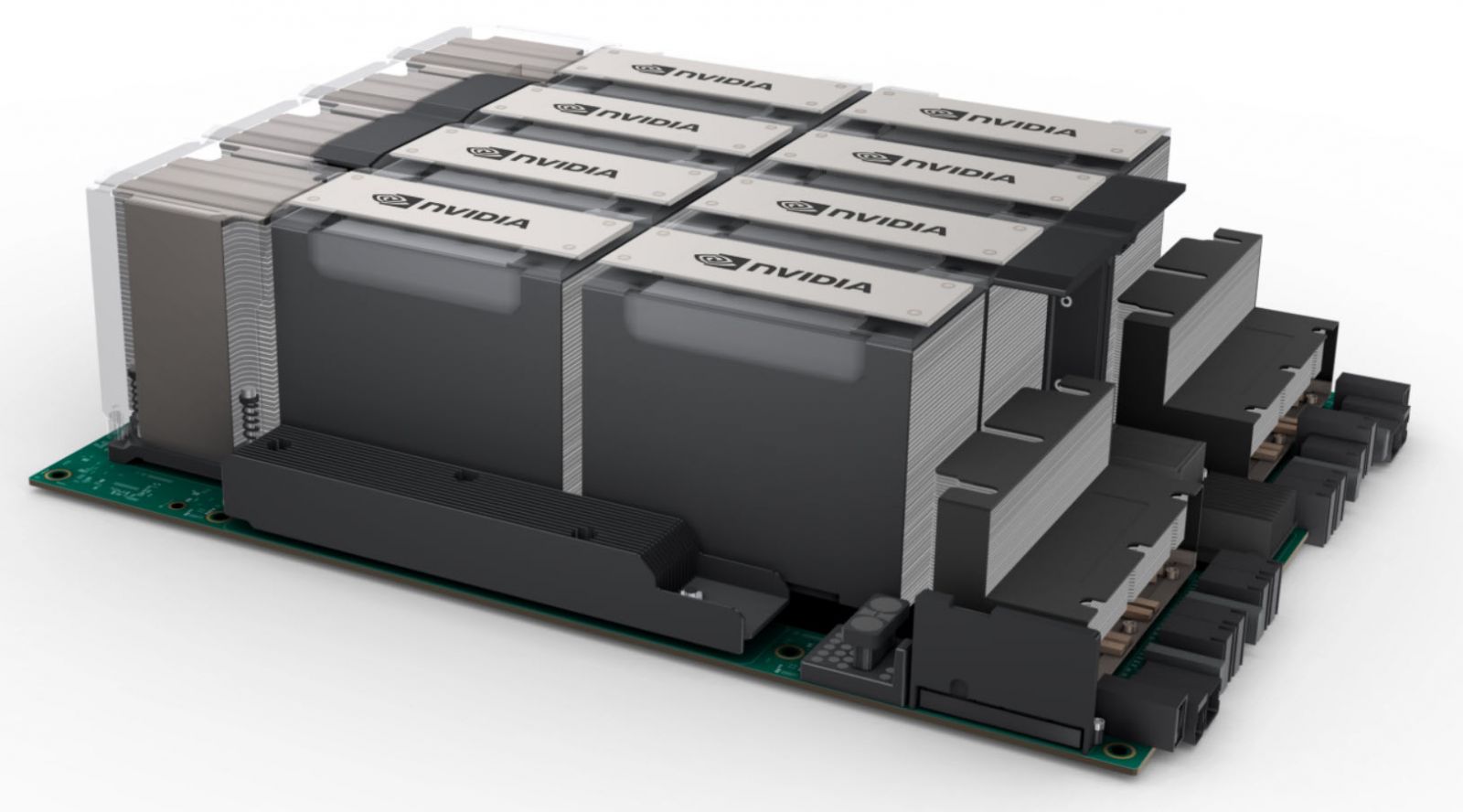

The NVIDIA H200 141GB GPU is offered either as a 700W SXM5 module or as a double-wide PCIe GPU adapter (H200 NVL). Four or eight SXM5 GPU modules are implemented with a fully connected NVLink topology in supported ThinkSystem servers. SXM5 GPUs are either air-cooled or water-cooled, depending on the server. PCIe double-wide GPUs are air-cooled and can be interconnected using 2-way or 4-way NVLink bridges.

Leveraging the power of H200 multi-precision Tensor Cores, an eight-way HGX H200 provides over 32 petaFLOPS of FP8 deep learning compute and over 1.1TB of aggregate HBM memory for the highest performance in generative AI and HPC applications.

Figure 1. ThinkSystem NVIDIA HGX H200 141GB 700W 8-GPU Board in the ThinkSystem SR680a V3 server
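The aggregate-memory figure above follows directly from the per-GPU capacity. A minimal Python check, assuming decimal units (1 TB = 1,000 GB):

```python
# Aggregate HBM3e capacity of the HGX H200 boards described in this guide.
# Assumes decimal units (1 TB = 1,000 GB); per-GPU capacity is 141 GB.
PER_GPU_HBM_GB = 141

def aggregate_hbm_gb(num_gpus: int) -> int:
    """Total HBM across all GPUs on a board, in GB."""
    return num_gpus * PER_GPU_HBM_GB

for gpus in (4, 8):
    total = aggregate_hbm_gb(gpus)
    print(f"{gpus}-GPU board: {total} GB (~{total / 1000:.2f} TB)")
```

The 8-GPU result (1,128 GB) is the "over 1.1TB" figure; the 4-GPU board provides half of that.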

Did you know?

To maximize compute performance, H200 is the world’s first GPU with HBM3e memory, delivering 4.8TB/s of memory bandwidth, a 1.4X increase over H100. H200 also nearly doubles GPU memory capacity, to 141GB. The combination of faster and larger HBM memory accelerates the performance of computationally intensive generative AI and HPC applications while meeting the evolving demands of growing model sizes.
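The 1.4X and "nearly doubled" figures can be checked against an H100 baseline. The H100 values used here (80 GB of HBM3 at 3.35 TB/s, the H100 SXM5 configuration) come from NVIDIA's published H100 specifications rather than from this guide, so treat them as an assumption:

```python
# Generation-over-generation ratios for H200 vs. H100 (SXM5 variants).
# H200 values are from this guide; H100 values are assumed from NVIDIA's
# published H100 SXM5 specifications (80 GB HBM3, 3.35 TB/s).
H200 = {"capacity_gb": 141, "bandwidth_tb_per_s": 4.8}
H100 = {"capacity_gb": 80, "bandwidth_tb_per_s": 3.35}

bandwidth_ratio = H200["bandwidth_tb_per_s"] / H100["bandwidth_tb_per_s"]
capacity_ratio = H200["capacity_gb"] / H100["capacity_gb"]

print(f"Bandwidth: {bandwidth_ratio:.2f}x")
print(f"Capacity:  {capacity_ratio:.2f}x")
```

Under these assumptions the ratios come out to about 1.43x bandwidth and 1.76x capacity, consistent with the rounded claims in the paragraph above.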

Part number information

The following table shows the part numbers for the 8-GPU and 4-GPU boards and the PCIe adapter. For the boards, the feature codes include all H200 GPUs in the SXM form factor plus the NVLink high-speed interconnections between the GPUs.

The table also indicates which GPUs include a 5-year subscription to NVIDIA AI Enterprise Software (NVAIE).

* ThinkSystem NVIDIA H200 NVL 141GB PCIe GPU Gen5 Passive GPU, C3V3 includes a 5-year subscription to NVIDIA AI Enterprise Software (NVAIE). See the NVIDIA AI Enterprise Software section.

The NVIDIA H200 GPU is a Controlled product, which means the GPU is not offered in certain markets, as determined by the US Government.

Features

The NVIDIA H200 Tensor Core GPU supercharges generative AI and HPC with game-changing performance and memory capabilities. As the first GPU with HBM3e, H200’s faster, larger memory fuels the acceleration of generative AI and LLMs while advancing scientific computing for HPC workloads.

NVIDIA HGX™ H200, the world’s leading AI computing platform, features the H200 GPU for the fastest performance. An eight-way HGX H200 provides over 32 petaFLOPS of FP8 deep learning compute and over 1.1TB of aggregate high-bandwidth memory for the highest performance in generative AI and HPC applications.

Key AI and HPC workload features:

- Unlock Insights With High-Performance LLM Inference

In the ever-evolving landscape of AI, businesses rely on large language models to address a diverse range of inference needs. An AI inference accelerator must deliver the highest throughput at the lowest TCO when deployed at scale for a massive user base. H200 delivers up to 2X the inference performance of H100 when handling LLMs such as Llama 2 70B.
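One reason larger memory helps LLM inference is that the capacity left over after loading the model weights holds the attention key/value (KV) cache, which bounds how many tokens can be served concurrently. The rough Python estimate below is a sketch under stated assumptions, not a sizing tool: it assumes the publicly documented Llama 2 70B shape (80 layers, 8 KV heads, head dimension 128), FP8 weights (about 70 GB), an FP16 KV cache, decimal gigabytes, and an 80 GB H100 for comparison, and it ignores activations, framework overhead, and fragmentation.

```python
# Rough KV-cache capacity estimate for Llama 2 70B on a single GPU.
# Assumptions (not from this guide): 80 layers, grouped-query attention with
# 8 KV heads of dimension 128; weights stored in FP8 (~70 GB); KV cache stored
# in FP16 (2 bytes per element); decimal GB. Overheads are ignored.
GB = 10**9

def kv_bytes_per_token(layers=80, kv_heads=8, head_dim=128, bytes_per_elem=2):
    """Bytes of K and V cached per token across all layers."""
    return 2 * layers * kv_heads * head_dim * bytes_per_elem  # 2 = one K + one V

def cacheable_tokens(gpu_gb, weights_gb=70):
    """Tokens whose KV cache fits in the memory left after loading weights."""
    free_bytes = (gpu_gb - weights_gb) * GB
    return max(free_bytes, 0) // kv_bytes_per_token()

for name, capacity_gb in (("H100 80GB", 80), ("H200 141GB", 141)):
    print(f"{name}: ~{cacheable_tokens(capacity_gb):,} tokens of KV cache")
```

Under these assumptions the H200 holds roughly seven times as many cached tokens, which allows larger batches or longer contexts per GPU; actual throughput also depends on bandwidth, compute, and the serving software.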

- Optimize Generative AI Fine-Tuning Performance

Large language models can be customized to specific business case needs with fine-tuning, low-rank adaptation (LoRA), or retrieval-augmented generation (RAG) methods. These methods bridge the gap between general pretrained results and task-specific solutions, making them essential tools for industry and research applications.

NVIDIA H200’s Transformer Engine and fourth-generation Tensor Cores speed up fine-tuning by 5.5X over A100 GPUs. This performance increase allows enterprises and AI practitioners to quickly optimize and deploy generative AI to benefit their business. Compared to fully training foundation models from scratch, fine-tuning offers better energy efficiency and the fastest access to customized solutions needed to grow business.

- Industry-Leading Generative AI Training

The era of generative AI has arrived, and it requires billion-parameter models to take on the paradigm shift in business operations and customer experiences.

NVIDIA H200 GPUs feature the Transformer Engine with FP8 precision, which provides up to 5X faster training over A100 GPUs for large language models such as GPT-3 175B. The combination of fourth-generation NVLink, which offers 900GB/s of GPU-to-GPU interconnect, PCIe Gen5, and NVIDIA Magnum IO™ software, delivers efficient scalability from small enterprise to massive unified computing clusters of GPUs. These infrastructure advances, working in tandem with the NVIDIA AI Enterprise software suite, make the NVIDIA H200 the most powerful end-to-end generative AI and HPC data center platform.
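To put the 900GB/s NVLink figure in context, the sketch below compares ideal transfer times against a PCIe Gen5 x16 link. Assumptions not taken from this guide: both figures are treated as bidirectional totals split evenly per direction, PCIe Gen5 x16 is taken as a nominal 128 GB/s bidirectional (about 64 GB/s each way), the 10 GB payload is hypothetical, and protocol overhead is ignored, so real transfers are slower on both links.

```python
# Ideal one-direction transfer times over NVLink vs. PCIe Gen5 x16.
# Bandwidths are nominal bidirectional totals; half is available per direction.
NVLINK_BIDIR_GB_PER_S = 900      # fourth-generation NVLink per GPU (from this guide)
PCIE5_X16_BIDIR_GB_PER_S = 128   # assumed nominal PCIe Gen5 x16 figure

def transfer_seconds(payload_gb, bidir_gb_per_s):
    """Time to move payload_gb in one direction, ignoring protocol overhead."""
    return payload_gb / (bidir_gb_per_s / 2)

payload_gb = 10  # hypothetical payload, e.g. a shard of weights or gradients
t_nvlink = transfer_seconds(payload_gb, NVLINK_BIDIR_GB_PER_S)
t_pcie = transfer_seconds(payload_gb, PCIE5_X16_BIDIR_GB_PER_S)
print(f"NVLink: {t_nvlink * 1e3:.1f} ms, PCIe Gen5 x16: {t_pcie * 1e3:.1f} ms, "
      f"ratio {t_pcie / t_nvlink:.1f}x")
```

Because both links are modeled the same way, the ratio (about 7x) depends only on the two nominal bandwidths, not on the payload size.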

- Supercharged High-Performance Computing

Memory bandwidth is crucial for high-performance computing applications, as it enables faster data transfer and reduces complex processing bottlenecks. For memory-intensive HPC applications like simulations, scientific research, and artificial intelligence, H200’s higher memory bandwidth ensures that data can be accessed and manipulated efficiently, leading to up to 110X faster time to results compared to CPUs.

The NVIDIA data center platform consistently delivers performance gains beyond Moore’s Law. And H200’s breakthrough AI capabilities further amplify the power of HPC+AI to accelerate time to discovery for scientists and researchers working on solving the world’s most important challenges.
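For a bandwidth-bound kernel, memory bandwidth sets a floor on run time: time >= bytes moved / bandwidth. The Python sketch below applies that bound to a STREAM-style triad (a[i] = b[i] + s * c[i]) on double-precision arrays. The array size is hypothetical, write-allocate traffic and caches are ignored, and sustained bandwidth is always below the 4.8TB/s peak, so the result is a lower bound rather than a prediction.

```python
# Lower-bound run time of a bandwidth-bound STREAM-style triad: a = b + s * c.
# Each element reads b[i] and c[i] and writes a[i]: 3 x 8 bytes for float64.
BYTES_PER_DOUBLE = 8
H200_PEAK_BW = 4.8e12  # bytes/s, peak HBM3e bandwidth from this guide

def triad_bytes(n_elements):
    """Bytes moved by one triad pass (ignores write-allocate and cache reuse)."""
    return 3 * BYTES_PER_DOUBLE * n_elements

def min_seconds(n_bytes, bandwidth=H200_PEAK_BW):
    """Time floor when the kernel is limited purely by memory bandwidth."""
    return n_bytes / bandwidth

n = 2**30  # hypothetical: ~1.07 billion doubles per array (~8.6 GB each)
moved = triad_bytes(n)
print(f"{moved / 1e9:.1f} GB moved, >= {min_seconds(moved) * 1e3:.2f} ms at peak bandwidth")
```

For such a kernel, halving the bytes moved or raising the bandwidth shortens the floor proportionally, which is why higher memory bandwidth translates directly into faster memory-bound HPC codes.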

- Reduced Energy and TCO

In a world where energy conservation and sustainability are top of mind, the concerns of business leaders and enterprises have evolved. Enter accelerated computing, a leader in energy efficiency and TCO, particularly for workloads that thrive on acceleration, such as HPC and generative AI.

With the introduction of H200, energy efficiency and TCO reach new levels. This cutting-edge technology offers unparalleled performance, all within the same power profile as H100. AI factories and at-scale supercomputing systems that are not only faster but also more eco-friendly deliver an economic edge that propels the AI and scientific community forward. For at-scale deployments, H200 systems provide 5X more energy savings and 4X better cost of ownership savings over the NVIDIA Ampere architecture generation.
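The efficiency argument is arithmetic: at a fixed power draw, energy per unit of work falls in proportion to throughput. The sketch below uses the 700W SXM power figure from this guide and purely relative throughput values (1.0 for a baseline, 2.0 for a hypothetical doubling); these are illustrative inputs, not measured results.

```python
# Energy per unit of work at a fixed power envelope.
# Throughput values are relative and hypothetical (baseline = 1.0).
POWER_W = 700  # SXM5 power profile cited in this guide

def joules_per_unit(power_w, units_per_second):
    """Energy consumed per unit of work (for example, per token or per sample)."""
    return power_w / units_per_second

baseline = joules_per_unit(POWER_W, 1.0)
doubled = joules_per_unit(POWER_W, 2.0)
print(f"baseline: {baseline:.0f} J/unit, 2x throughput: {doubled:.0f} J/unit "
      f"({1 - doubled / baseline:.0%} less energy per unit)")
```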

Key features of the Hopper architecture:

- NVIDIA H200 Tensor Core GPU

H200 is the world’s most advanced chip ever built. It features major advances to accelerate AI, HPC, memory bandwidth, interconnect, and communication at data center scale.

- Transformer Engine

The Transformer Engine uses software and Hopper Tensor Core technology designed to accelerate training for models built from the world’s most important AI model building block, the transformer. Hopper Tensor Cores can apply mixed FP8 and FP16 precisions to dramatically accelerate AI calculations for transformers.
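To illustrate what FP8 computation involves, the Python sketch below simulates per-tensor scaling into the FP8 E4M3 format (4 exponent bits, 3 mantissa bits, largest finite value 448): the tensor is scaled so its largest magnitude maps to 448, then each value is rounded to the nearest representable E4M3 value. This is a simplified stand-in for illustration only; Transformer Engine's actual scaling recipes (such as delayed scaling based on a history of maximum values) run in NVIDIA's libraries and on the Tensor Cores, not in code like this.

```python
import math

# FP8 E4M3 parameters (4 exponent bits, 3 mantissa bits).
E4M3_MAX = 448.0     # largest finite E4M3 magnitude
E4M3_MIN_EXP = -6    # exponent of the smallest normal value, 2**-6
MANTISSA_BITS = 3

def round_to_e4m3(y):
    """Round y to the nearest E4M3 value (ties to even), saturating at +/-448."""
    if y == 0.0:
        return 0.0
    _, e = math.frexp(y)                  # y = m * 2**e with 0.5 <= |m| < 1
    exp = max(e - 1, E4M3_MIN_EXP)        # floor(log2|y|); below 2**-6 values are subnormal
    step = 2.0 ** (exp - MANTISSA_BITS)   # spacing between neighboring E4M3 values
    q = round(y / step) * step            # Python's round() uses ties-to-even
    return max(-E4M3_MAX, min(E4M3_MAX, q))

def fp8_quantize(values):
    """Per-tensor scaling: map the largest magnitude onto E4M3_MAX, then round."""
    amax = max(abs(v) for v in values)
    scale = E4M3_MAX / amax if amax > 0 else 1.0
    return [round_to_e4m3(v * scale) for v in values], scale

def fp8_dequantize(codes, scale):
    """Undo the per-tensor scale to recover approximate original values."""
    return [c / scale for c in codes]

weights = [0.5, -1.25, 3.0, 0.01]
codes, scale = fp8_quantize(weights)
restored = fp8_dequantize(codes, scale)
for w, r in zip(weights, restored):
    print(f"{w:>6} -> {r:.4f}")
```

With only 3 mantissa bits, each restored value here stays within about 6% (2**-4) of the original; scaling the tensor first keeps small values away from the coarse subnormal range.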

- NVLink Switch System

The NVLink Switch System enables the scaling of multi-GPU input/output (IO) across multiple servers. The system delivers up to 9X higher bandwidth than InfiniBand HDR on the NVIDIA Ampere architecture.

- NVIDIA Confidential Computing

NVIDIA Confidential Computing is a built-in security feature of H100 and H200 GPUs that lets users protect the confidentiality and integrity of their data and applications in use while accessing the GPUs.

- Second-Generation Multi-Instance GPU (MIG)

The Hopper architecture’s second-generation MIG supports multi-tenant, multi-user configurations in virtualized environments, securely partitioning the GPU into isolated, right-size instances to maximize quality of service (QoS) for 7X more secured tenants.

- DPX Instructions

Hopper’s DPX instructions accelerate dynamic programming algorithms by 40X compared to CPUs and 7X compared to NVIDIA Ampere architecture GPUs. This leads to dramatically faster times in disease diagnosis, real-time routing optimizations, and graph analytics.
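Routing optimization is a typical dynamic-programming workload. The plain-Python Floyd-Warshall all-pairs shortest-path sketch below runs on the CPU and does not use DPX; it is included only to show the inner add-then-minimum update that dominates such algorithms, which is the kind of operation DPX instructions are designed to accelerate. The example graph is hypothetical.

```python
import math

INF = math.inf

def floyd_warshall(weights):
    """All-pairs shortest paths; weights[i][j] is the edge cost or INF if no edge."""
    n = len(weights)
    dist = [row[:] for row in weights]  # copy so the input is not modified
    for k in range(n):                  # allow paths that pass through node k
        row_k = dist[k]
        for i in range(n):
            d_ik = dist[i][k]
            if d_ik == INF:
                continue
            row_i = dist[i]
            for j in range(n):
                # Core DP update: add, then take the minimum.
                candidate = d_ik + row_k[j]
                if candidate < row_i[j]:
                    row_i[j] = candidate
    return dist

# Hypothetical 4-node directed road network (costs in arbitrary units).
graph = [
    [0,   3,   8,   INF],
    [INF, 0,   2,   7],
    [INF, INF, 0,   1],
    [INF, INF, INF, 0],
]
shortest = floyd_warshall(graph)
print(shortest[0])
```

The triple loop performs n^3 add-and-compare steps, so the cost of that single fused update largely determines run time as graphs grow.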

Technical specifications

The following table lists the NVIDIA H200 GPU specifications.

* Without / with structural sparsity enabled

Server support

The following tables list the ThinkSystem servers that are compatible.

- Contains 8 separate GPUs connected via high-speed interconnects

Operating system support

For SXM GPUs, operating system support is based on that of the supported servers. See the SR680a V3 server product guide for details: https://lenovopress.lenovo.com/lp1909-thinksystem-sr680a-v3-server

The following tables list the OS support for the PCIe adapter.

1 The H200 GPU is supported only on the SR650a V4 (7DGDCTO2WW), not on the SR650 V4 (7DGDCTO1WW).

NVIDIA GPU software

This section lists the NVIDIA software that is available from Lenovo.

As listed in Table 1 in the Part number information section, the PCIe adapter version of the H200 includes a 5-year subscription to NVIDIA AI Enterprise Software (NVAIE).

NVIDIA AI Enterprise Software

Lenovo offers the NVIDIA AI Enterprise (NVAIE) cloud-native enterprise software. NVIDIA AI Enterprise is an end-to-end, cloud-native suite of AI and data analytics software, optimized, certified, and supported by NVIDIA to run on VMware vSphere and bare-metal with NVIDIA-Certified Systems™. It includes key enabling technologies from NVIDIA for rapid deployment, management, and scaling of AI workloads in the modern hybrid cloud.

NVIDIA AI Enterprise is licensed on a per-GPU basis. NVIDIA AI Enterprise products can be purchased either as a perpetual license with support services or as an annual or multi-year subscription.

- The perpetual license provides the right to use the NVIDIA AI Enterprise software indefinitely, with no expiration. NVIDIA AI Enterprise perpetual licenses must be purchased in conjunction with one-year, three-year, or five-year support services. A one-year support service is also available for renewals.

- The subscription offerings are an affordable option that gives IT departments more flexibility in managing license volumes. NVIDIA AI Enterprise subscriptions include support services for the duration of the subscription license.

The features of NVIDIA AI Enterprise Software are listed in the following table.

Note: Maximum 10 concurrent VMs per product license

The following table lists the ordering part numbers and feature codes.

Find more information in the NVIDIA AI Enterprise Sizing Guide.

NVIDIA HPC Compiler Software

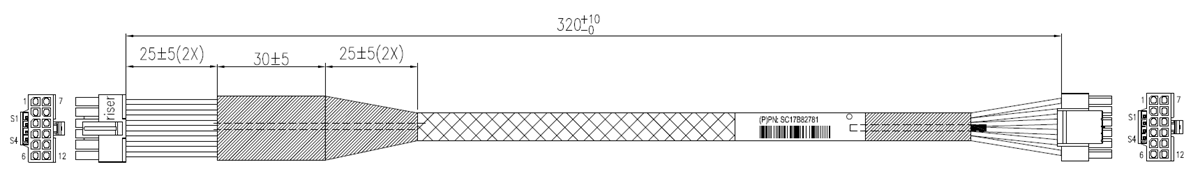

Auxiliary power cables

The power cables needed for the H200 SXM GPUs are included with the supported servers.

The H200 PCIe GPU option part number does not ship with auxiliary power cables. Cables are server-specific due to length requirements. For CTO orders, auxiliary power cables are derived by the configurator. For field upgrades, cables will need to be ordered separately as listed in the table below.

Regulatory approvals

The NVIDIA H200 GPU has the following regulatory approvals:

- RCM

- BSMI

- CE

- FCC

- ICES

- KCC

- cUL, UL

- VCCI

Seller training courses

The following sales training courses are offered for employees and partners (login required). Courses are listed in date order.

- Lenovo VTT Cloud Architecture: Empowering AI Innovation with NVIDIA RTX Pro 6000 and Lenovo Hybrid AI Services

2025-09-18 | 68 minutes | Employees Only

Join Dinesh Tripathi, Lenovo Technical Team Lead for GenAI, and Jose Carlos Huescas, Lenovo HPC & AI Product Manager, for an in-depth, interactive technical webinar. This session will explore how to effectively position the NVIDIA RTX PRO 6000 Blackwell Server Edition in AI and visualization workflows, with a focus on real-world applications and customer value.

Published: 2025-09-18

We’ll cover:

- NVIDIA RTX PRO 6000 Blackwell Overview: Key specs, performance benchmarks, and use cases in AI, rendering, and simulation.

- Positioning Strategy: How to align NVIDIA RTX PRO 6000 with customer needs across industries like healthcare, manufacturing, and media.

- Lenovo Hybrid AI 285 Services: Dive into Lenovo’s Hybrid AI 285 architecture and learn how it supports scalable AI deployments from edge to cloud.

Whether you're enabling AI solutions or guiding customers through infrastructure decisions, this session will equip you with the insights and tools to drive impactful conversations.

Tags: Industry solutions, SMB, Services, Technical Sales, Technology solutions

Length: 68 minutes

Course code: DVCLD227

Start the training:

Employee link: Grow@Lenovo

- Think AI Weekly: ISG & SSG Better Together: Uniting AI Solutions & Services for Smarter Outcomes

2025-08-01 | 55 minutes | Employees Only

View this session to hear from our speakers Allen Holmes, AI Technologist, ISG, and Balaji Subramaniam, AI Regional Leader-Americas, SSG.

Published: 2025-08-01

Topics include:

• An overview of ISG & SSG AI CoE Offerings with Customer Case Studies

• The Enterprise AI Deal Engagement Flow with ISG and SSG

• How sellers can leverage this partnership to differentiate with Enterprise clients.

• NEW COURSE: From Inception to Execution: Evolution of an AI Deal

Tags: Artificial Intelligence (AI), Sales, Services, Technology Solutions, TruScale Infrastructure as a Service

Length: 55 minutes

Course code: DTAIW145

Start the training:

Employee link: Grow@Lenovo

- Think AI Weekly: Third-Party Due Diligence Requirements for GPU Opportunities

2025-07-24 | 46 minutes | Employees Only

View this session to hear from Tanya Roychowdhury, Legal Counsel Director, and Andrea Fazio, Third-party Due Diligence Project Manager, as they explain:

Published: 2025-07-24

- What are the requirements?

- Why are they important?

- What this means to sales

Tags: Artificial Intelligence (AI), DataCenter Products, NVIDIA, Sales, Technical Sales

Length: 46 minutes

Course code: DTAIW143

Start the training:

Employee link: Grow@Lenovo

- ThinkSystem Supercomputing Servers Powered by NVIDIA

2025-06-27 | 30 minutes | Employees and Partners

This course offers you information about the Lenovo SC777 V4 Neptune server, the first Lenovo server to use an Arm processor from NVIDIA. By the end of this course, you’ll be able to list three features of the ThinkSystem SC777 V4 Neptune server, list three features of the ThinkSystem N1380 Neptune enclosure, describe two customer benefits of the ThinkSystem SC777 V4 Neptune server, and list four workload environments to which the SC777 V4 server is well suited.

Published: 2025-06-27

Tags: DataCenter Products, NVIDIA, ThinkSystem

Length: 30 minutes

Course code: SXXW2545

Start the training:

Employee link: Grow@Lenovo

Partner link: Lenovo 360 Learning Center

- VTT AI: NVIDIA and Lenovo: Data Center Platform Overview

2025-06-10 | 77 minutes | Employees Only

Please join this session to hear Steve Stein, Senior Product Marketing Manager, NVIDIA, and Naman Malhotra, Senior Product Manager, Lenovo, as they present these topics:

Published: 2025-06-10

• NVIDIA Accelerated Computing Portfolio

• Use Cases and Positioning

• Lenovo Platforms and Solutions

Tags: Artificial Intelligence (AI), Nvidia, Server

Length: 77 minutes

Course code: DVAI216

Start the training:

Employee link: Grow@Lenovo

- VTT AI: Introducing the Lenovo Hybrid AI 285 Platform April 2025

2025-04-30 | 60 minutes | Employees Only

The Lenovo Hybrid AI 285 Platform enables enterprises of all sizes to quickly deploy AI infrastructures supporting use cases as either new greenfield environments or as an extension to current infrastructures. The 285 Platform enables the use of the NVIDIA AI Enterprise software stack. The Hybrid AI 285 platform is the perfect foundation supporting Lenovo Validated Designs.

Published: 2025-04-30

• Technical overview of the Hybrid AI 285 platform

• Hybrid AI platforms as infrastructure frameworks for LVDs addressing data center-based AI solutions.

• Accelerate AI adoption and reduce deployment risks

Tags: Artificial Intelligence (AI), Nvidia, Technical Sales, Lenovo Hybrid AI 285

Length: 60 minutes

Course code: DVAI215

Start the training:

Employee link: Grow@Lenovo

- Lenovo Cloud Architecture VTT: Supercharge Your Enterprise AI with NVIDIA AI Enterprise on Lenovo Hybrid AI Platform

2025-04-17 | 75 minutes | Employees and Partners

Join us for an in-depth webinar with Justin King, Principal Product Marketing Manager for Enterprise AI exploring the power of NVIDIA AI Enterprise, delivering Generative and Agentic AI outcomes deployed with Lenovo Hybrid AI platform environments.

Published: 2025-04-17

In today’s data-driven landscape, AI is evolving at high speed, with new techniques delivering more accurate responses. Enterprises are seeking not just to understand AI but to learn how they can achieve AI-driven business outcomes.

As a result, the demand for secure, scalable, and high-performing AI operations (and the skills to deliver them) is top of mind for many. Learn how NVIDIA AI Enterprise, a comprehensive software suite optimized for NVIDIA GPUs, provides the tools and frameworks, including NVIDIA NIM, NeMo, and Blueprints, to accelerate AI development and deployment while reducing risk, all within the control and security of your Lenovo customer’s hybrid AI environment.

Tags: Artificial Intelligence (AI), Cloud, Data Management, Nvidia, Technical Sales

Length: 75 minutes

Course code: DVCLD221

Start the training:

Employee link: Grow@Lenovo

Partner link: Lenovo 360 Learning Center

- AI VTT: GTC Update and The Lenovo LLM Sizing Guide

2025-03-12 | 86 minutes | Employees Only

This session has two parts. In part one, Robert Daigle, Director, Global AI Solutions, and Hande Sahin-Bahceci, AI Solutions Marketing Leader, explain the upcoming announcements for NVIDIA GTC. In part two, Sachin Wani, AI Data Scientist, explains the Lenovo LLM Sizing Guide, covering these topics:

Published: 2025-03-12

• Minimum GPU requirements for fine-tuning/training and inference

• Gathering requirements for the customer's use case

• LLMs from a technical perspective

Tags: Artificial Intelligence (AI), Technical Sales

Length: 86 minutes

Course code: DVAI214

Start the training:

Employee link: Grow@Lenovo

- Partner Technical Webinar - NVIDIA Portfolio

2024-11-06 | 60 minutes | Employees and Partners

In this 60-minute replay, Jason Knudsen of NVIDIA presented the NVIDIA Computing Platform. Jason talked about the full portfolio from GPUs to Networking to AI Enterprise and NIMs.

Published: 2024-11-06

Tags: Artificial Intelligence (AI), Nvidia

Length: 60 minutes

Course code: 110124

Start the training:

Employee link: Grow@Lenovo

Partner link: Lenovo 360 Learning Center

- Q2 Solutions Launch TruScale GPU Next Generation Management in the AI Era Quick Hit

2024-09-10 | 6 minutes | Employees and Partners

This Quick Hit focuses on Lenovo announcing additional ways to help you build, scale, and evolve your customer’s private AI faster for improved ROI with TruScale GPU as a Service, AI-driven systems management, and infrastructure transformation services.

Published: 2024-09-10

Tags: Artificial Intelligence (AI), Services, TruScale

Length: 6 minutes

Course code: SXXW2543a

Start the training:

Employee link: Grow@Lenovo

Partner link: Lenovo 360 Learning Center

- VTT AI: The NetApp AIPod with Lenovo for NVIDIA OVX

2024-08-13 | 38 minutes | Employees and Partners

For some organizations, AI is out of reach due to cost, integration complexity, and time to deployment. Previously, organizations relied on frequently retraining their LLMs with the latest data, a costly and time-consuming process. The NetApp AIPod with Lenovo for NVIDIA OVX combines NVIDIA-Certified OVX Lenovo ThinkSystem SR675 V3 servers with validated NetApp storage to create a converged infrastructure specifically designed for AI workloads. Using this solution, customers will be able to conduct AI RAG and inferencing operations for use cases like chatbots, knowledge management, and object recognition.

Published: 2024-08-13

Topics covered in this VTT session include:

• Where Lenovo fits in the solution

• NetApp AIPod with Lenovo for NVIDIA OVX Solution Overview

• Challenges/pain points that this solution solves for enterprises deploying AI

• Solution value/benefits of the combined NetApp, Lenovo, and NVIDIA OVX-Certified Solution

Tags: Artificial Intelligence (AI), Nvidia, Sales, Technical Sales, ThinkSystem

Length: 38 minutes

Course code: DVAI206

Start the training:

Employee link: Grow@Lenovo

Partner link: Lenovo 360 Learning Center

- Guidance for Selling NVIDIA Products at Lenovo for ISG

2024-07-01 | 25 minutes | Employees and Partners

This course gives key talking points about the Lenovo and NVIDIA partnership in the Data Center. Details are included on where to find the products that are included in the partnership and what to do if NVIDIA products are needed that are not included in the partnership. Contact information is included if help is needed in choosing which product is best for your customer. At the end of this session, sellers should be able to explain the Lenovo and NVIDIA partnership, describe the products Lenovo can sell through the partnership with NVIDIA, help a customer purchase other NVIDIA products, and get assistance with choosing NVIDIA products to fit customer needs.

Published: 2024-07-01

Tags: Artificial Intelligence (AI), Nvidia

Length: 25 minutes

Course code: DNVIS102

Start the training:

Employee link: Grow@Lenovo

Partner link: Lenovo 360 Learning Center

- Think AI Weekly: Lenovo AI PCs & AI Workstations

2024-05-23 | 60 minutes | Employees Only

Join Mike Leach, Sr. Manager, Workstations Solutions, and Pooja Sathe, Director Commercial AI PCs, as they discuss why Lenovo AI Developer Workstations and AI PCs are the most powerful, where they fit into the device-to-cloud ecosystem, and this week’s Microsoft announcement, Copilot+ PC.

Published: 2024-05-23

Tags: Artificial Intelligence (AI), ThinkStation

Length: 60 minutes

Course code: DTAIW105

Start the training:

Employee link: Grow@Lenovo

Related links

For more information, refer to these documents:

- ThinkSystem and ThinkAgile GPU Summary: https://lenovopress.lenovo.com/lp0768-thinksystem-thinkagile-gpu-summary

- ServerProven compatibility: https://serverproven.lenovo.com/

- NVIDIA H200 product page: https://www.nvidia.com/en-us/data-center/h200/

- NVIDIA Hopper Architecture page: https://www.nvidia.com/en-us/data-center/technologies/hopper-architecture/

- ThinkSystem SR680a V3 product guide: https://lenovopress.lenovo.com/lp1909-thinksystem-sr680a-v3-server

Trademarks

Lenovo and the Lenovo logo are trademarks or registered trademarks of Lenovo in the United States, other countries, or both. A current list of Lenovo trademarks is available on the Web at https://www.lenovo.com/us/en/legal/copytrade/.

The following terms are trademarks of Lenovo in the United States, other countries, or both:

Lenovo®

ServerProven®

ThinkAgile®

ThinkSystem®

The following terms are trademarks of other companies:

AMD is a trademark of Advanced Micro Devices, Inc.

Intel® and the Intel logo are trademarks of Intel Corporation or its subsidiaries.

Linux® is the trademark of Linus Torvalds in the U.S. and other countries.

Microsoft®, Windows Server®, and Windows® are trademarks of Microsoft Corporation in the United States, other countries, or both.

Other company, product, or service names may be trademarks or service marks of others.


Full Change History

Changes in the November 7, 2025 update:

- Added power cable information - Auxiliary power cables section

Changes in the July 28, 2025 update:

- Added NVIDIA part numbers - Part number information section

Changes in the March 25, 2025 update:

- The H200 supports Confidential Computing - Features section

Changes in the March 18, 2025 update:

- Added OS support for the ThinkSystem NVIDIA H200 NVL 141GB PCIe GPU Gen5 Passive GPU - Operating system support section

Changes in the March 3, 2025 update:

- Added the following H200 SXM5 offering in the ThinkSystem SR780a V3 server:

- ThinkSystem NVIDIA HGX H200 141GB 700W 8-GPU Liquid Cooled Board, C2ER

Changes in the January 14, 2025 update:

- The following are now orderable as option part numbers - Part number information section:

- ThinkSystem NVIDIA H200 NVL 141GB PCIe GPU Gen5 Passive GPU, 4X67A97315

- ThinkSystem NVIDIA 2-way bridge for H200 NVL, 4X67A97320

- ThinkSystem NVIDIA 4-way bridge for H200 NVL, 4X67A97322

Changes in the December 16, 2024 update:

- Removed the vGPU and Omniverse software part numbers as not supported with the H200 GPUs - NVIDIA GPU software section

Changes in the December 11, 2024 update:

- ThinkSystem NVIDIA H200 NVL 141GB PCIe GPU Gen5 Passive GPU, C3V3 includes a 5-year subscription to NVIDIA AI Enterprise Software (NVAIE) - Part number information section

Changes in the November 14, 2024 update:

- Added the following DW PCIe adapter:

- ThinkSystem NVIDIA H200 NVL 141GB PCIe GPU Gen5 Passive GPU, C3V3

Changes in the October 10, 2024 update:

- Added the following 4-GPU board:

- ThinkSystem NVIDIA HGX H200 141GB 700W 4-GPU Board, C3V2

Changes in the September 15, 2024 update:

- Added the Controlled status column to Table 1 - Part number information section

First published: April 23, 2024
