Author

Updated: 26 Aug 2025

Form Number: LP1834

PDF size: 77 pages, 9.3 MB

- Introduction

- Did you know?

- Key features

- Components and connectors

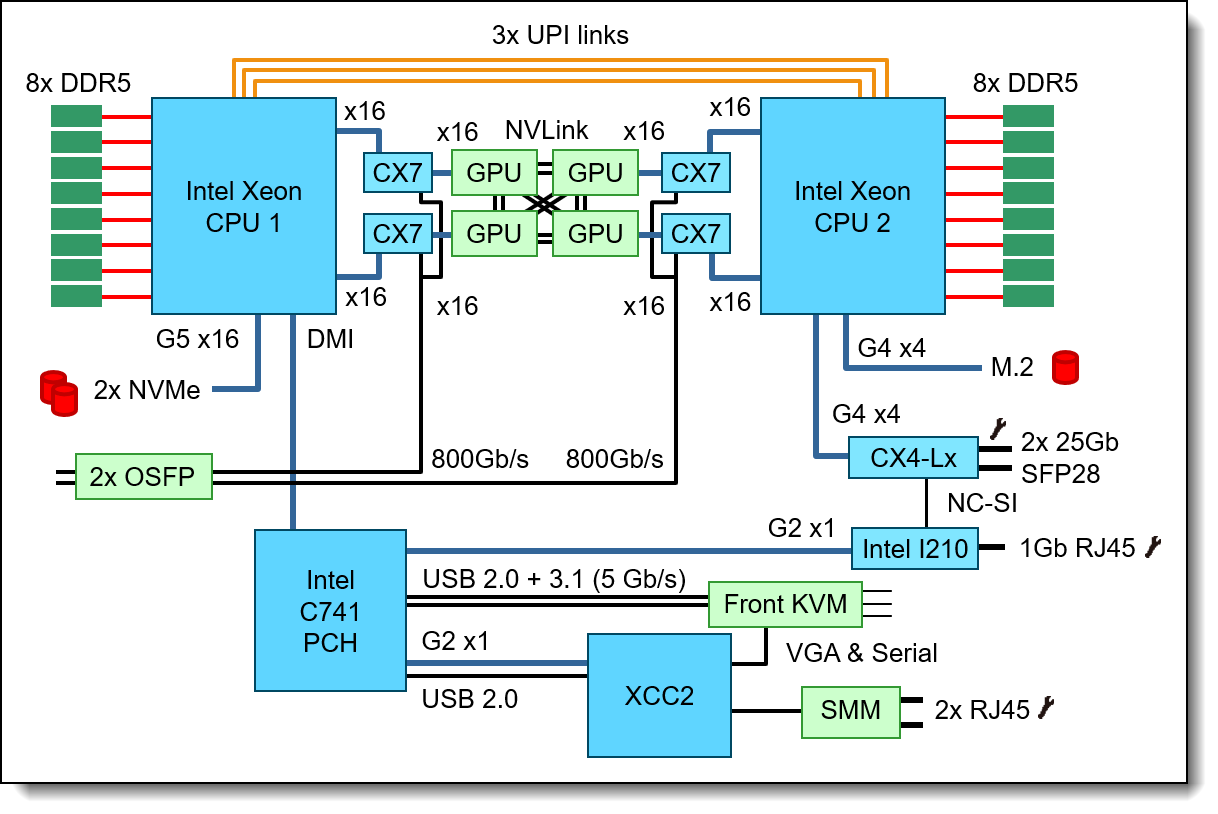

- System architecture

- Standard specifications - SD650-N V3 tray

- Standard specifications - DW612S enclosure

- Models

- Enclosure models

- Manifold assembly

- In-rack CDU assembly

- Processors

- Memory

- GPU accelerators

- Internal storage

- Controllers for internal storage

- Internal drive options

- Optical drives

- I/O expansion options

- Network adapters

- Storage host bus adapters

- Flash storage adapters

- Cooling

- Power supplies

- System Management

- Security

- Operating system support

- Physical and electrical specifications

- Operating environment

- Regulatory compliance

- Warranty upgrades and post-warranty support

- Services

- Lenovo TruScale

- Rack cabinets

- Lenovo Financial Services

- Seller training courses

- Related publications and links

- Related product families

- Trademarks

Abstract

The ThinkSystem SD650-N V3 Neptune DWC server is the next-generation high-performance server based on the fifth generation Lenovo Neptune™ direct water cooling platform.

With two 5th Gen Intel Xeon Scalable or Intel Xeon CPU Max Series processors, along with four NVIDIA H100 SXM5 GPUs, the ThinkSystem SD650-N V3 server features the latest technology from Intel and NVIDIA, combined with Lenovo's market-leading water-cooling solution, which results in extreme performance in extremely dense packaging.

This product guide provides essential pre-sales information to understand the SD650-N V3 server, its key features and specifications, components and options, and configuration guidelines. This guide is intended for technical specialists, sales specialists, sales engineers, IT architects, and other IT professionals who want to learn more about the SD650-N V3 and consider its use in IT solutions.

Change History

Changes in the August 26, 2025 update

- Added the following 15mm Trayless NVMe drives - Internal drive options section

  - ThinkSystem 2.5" 15mm VA 1.6TB Mixed Use NVMe PCIe 4.0 x4 Trayless SSD, 4XB7B09660

  - ThinkSystem 2.5" 15mm VA 3.2TB Mixed Use NVMe PCIe 4.0 x4 Trayless SSD, 4XB7B09661

  - ThinkSystem 2.5" 15mm VA 6.4TB Mixed Use NVMe PCIe 4.0 x4 Trayless SSD, 4XB7B09662

  - ThinkSystem 2.5" 15mm VA 1.92TB Read Intensive NVMe PCIe 4.0 x4 Trayless SSD, 4XB7B09657

  - ThinkSystem 2.5" 15mm VA 3.84TB Read Intensive NVMe PCIe 4.0 x4 Trayless SSD, 4XB7B09658

  - ThinkSystem 2.5" 15mm VA 7.68TB Read Intensive NVMe PCIe 4.0 x4 Trayless SSD, 4XB7B09659

- Added the following 7mm Trayless NVMe drives - Internal drive options section

  - ThinkSystem 2.5" 7mm VA 960GB Read Intensive NVMe PCIe 4.0 x4 Trayless SSD, 4XB7B09654

  - ThinkSystem 2.5" 7mm VA 1.92TB Read Intensive NVMe PCIe 4.0 x4 Trayless SSD, 4XB7B09655

  - ThinkSystem 2.5" 7mm VA 3.84TB Read Intensive NVMe PCIe 4.0 x4 Trayless SSD, 4XB7B09656

- Added the following M.2 drives - Internal drive options section

  - ThinkSystem M.2 VA 960GB Read Intensive NVMe PCIe 4.0 x4 NHS SSD, 4XB7B09651

  - ThinkSystem M.2 VA 1.92TB Read Intensive NVMe PCIe 4.0 x4 NHS SSD, 4XB7B09652

Introduction

The ThinkSystem SD650-N V3 Neptune DWC node is the next-generation high-performance server based on the fifth generation Lenovo Neptune™ direct water cooling platform. With two 5th Gen Intel Xeon Scalable or Intel Xeon CPU Max Series processors, along with four NVIDIA H200 SXM5 GPUs, the ThinkSystem SD650-N V3 server features the latest technology from Intel and NVIDIA, combined with Lenovo's market-leading water-cooling solution, which results in extreme performance in extremely dense packaging, supporting your applications from Exascale to Everyscale™.

The direct water cooled solution is designed to operate by using warm water, up to 45°C (113°F) depending on the configuration. Chillers are not needed for most customers, meaning even greater savings and a lower total cost of ownership. The nodes are housed in the upgraded ThinkSystem DW612S enclosure, a 6U rack mount unit that fits in a standard 19-inch rack.

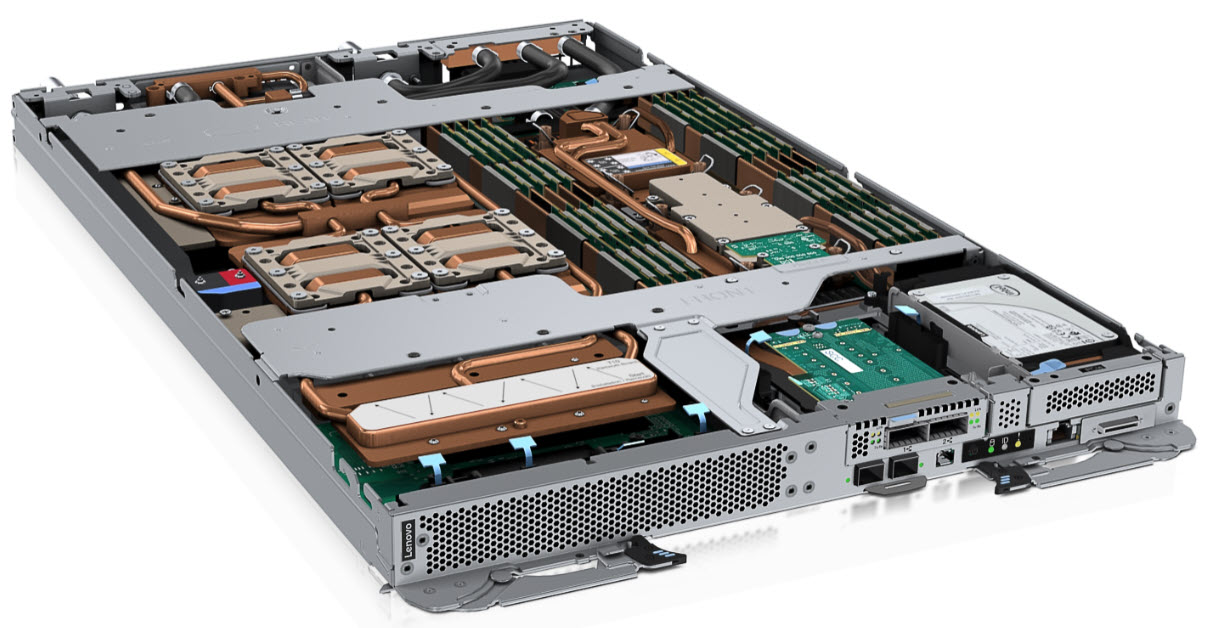

Figure 1. The ThinkSystem SD650-N V3 server tray with two processors and four NVIDIA H200 SXM5 GPUs

Did you know?

The ThinkSystem SD650-N V3 server tray and DW612S enclosure with direct water cooling provide the ultimate in data center cooling efficiencies and performance. On the SD650-N V3, four NVIDIA H200 SXM5 GPUs, interconnected using NVLink connections, deliver substantial performance improvements for High Performance Computing and for Artificial Intelligence training and inference workloads.

Key features

The Lenovo ThinkSystem SD650-N V3 server tray is designed for High Performance Computing (HPC), large-scale cloud, heavy simulations, and modeling. It implements Lenovo Neptune™ Direct Water Cooling (DWC) technology to optimally support workloads ranging from technical computing and grid deployments to analytics, and is ideally suited for fields such as research, life sciences, energy, simulation, and engineering.

The unique design of ThinkSystem SD650-N V3 provides the optimal balance of serviceability, performance, and efficiency. By using a standard rack with the ThinkSystem DW612S enclosure equipped with patented stainless steel drip-less quick connectors, the SD650-N V3 provides easy serviceability and extreme density that is well suited for clusters ranging from small enterprises to the world's largest supercomputers.

Lenovo Neptune™ direct liquid cooling uses custom-designed copper water loops rather than risky plastic retrofitting, so you have peace of mind implementing a platform with liquid cooling at the core of the design.

Compared to other technology, the SD650-N V3 direct water cooling:

- Reduces data center energy costs by up to 40%

- Increases system performance by up to 10%

- Delivers up to 100% heat removal efficiency (depending on the environment)

- Creates a quieter data center with its fan-less design

- Enables data center growth without adding computer room air conditioning

Lenovo’s direct water-cooled solutions are factory-integrated and are re-tested at the rack-level to ensure that a rack can be directly deployed at the customer site. This careful and consistent quality testing has been developed as a result of over a decade of experience designing and deploying DWC solutions to the very highest standards.

Scalability and performance

The ThinkSystem SD650-N V3 server tray and DW612S enclosure offer the following features to boost performance, improve scalability, and reduce costs:

- Each SD650-N V3 node supports two high-performance Intel Xeon processors, four NVIDIA H200 or H100 SXM GPUs, 16x TruDDR5 DIMMs, two OSFP 800G cages for high-speed I/O, and up to two drive bays, all in a 1U form factor.

- Up to 6x SD650-N V3 nodes are installed in the DW612S enclosure, occupying only 6U of rack space. It is a highly dense, scalable, and price-optimized offering.

- Supports two 5th Gen or 4th Gen Intel Xeon Scalable processors

  - Up to 64 cores and 128 threads

  - Core speeds of up to 3.9 GHz

  - TDP ratings of up to 385 W

- Supports two Intel Xeon CPU Max Series processors

  - Integrated 64GB High Bandwidth Memory (HBM)

  - Up to 56 cores and 112 threads

  - Core speeds of up to 2.7 GHz

  - TDP ratings of up to 350 W

- Supports four NVIDIA H200 or H100 GPUs

  - 700W SXM5 GPUs with configurable EDP (Electrical Design Point)

  - Up to 141GB HBM3e GPU memory per GPU

  - Interconnected using dual NVLink 4.0 connections

  - Up to 400 Gb/s NDR connectivity to each GPU through four NVIDIA ConnectX-7 embedded network controllers

- Support for DDR5 memory DIMMs to maximize the performance of the memory subsystem:

  - Up to 16 DDR5 memory DIMMs, 8 DIMMs per processor

  - 8 memory channels per processor (1 DIMM per channel)

  - Supports 1 DIMM per channel operating at 5600 MHz

  - Using 128GB 3DS RDIMMs, the server supports up to 2TB of system memory

- Supports high-speed GPU Direct networking with dual InfiniBand NDRx2 800Gb/s connections

  - Choice of two OSFP-DD or alternatively OSFP ports

  - Each port supports OSFP 800G (2x400 Gb/s) or OSFP 400G (400 Gb/s) connectivity

  - Direct connections to the GPUs - each OSFP port connects to two GPUs

- Supports up to two NVMe SSDs, as follows:

  - Two E3.S EDSFF SSDs, or

  - Two 7mm NVMe SSDs, or

  - One 15mm NVMe SSD

- The server is Compute Express Link (CXL) v1.1 Ready. With CXL 1.1 for next-generation workloads, you can reduce compute latency in the data center and lower TCO. CXL is a protocol that runs across the standard PCIe physical layer and can support both standard PCIe devices as well as CXL devices on the same link.

- Drives are high-performance NVMe drives, to maximize I/O performance in terms of throughput, bandwidth, and latency.

- Supports a PCIe 4.0 x4 high-speed M.2 NVMe drive installed in an adapter for convenient operating system boot and internal storage functions.

- The node includes one Gigabit and two 25 Gb Ethernet onboard ports for cost effective networking.

- The node offers PCI Express 5.0 I/O expansion capabilities that double the theoretical maximum bandwidth of PCIe 4.0 (32 GT/s in each direction for PCIe 5.0, compared to 16 GT/s with PCIe 4.0). A PCIe 5.0 x16 slot provides 128 GB/s of raw bidirectional bandwidth, enough to support a 400GbE network connection.
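The bandwidth figures in the list above can be checked with a short calculation. The following is a minimal sketch, not a Lenovo tool. It assumes 128b/130b line encoding for PCIe 5.0 and a 64-bit (8-byte) data path per DDR5 memory channel; the function names are illustrative only.

```python
# Theoretical peak bandwidth arithmetic (illustrative sketch).
# Assumptions: PCIe 5.0 uses 128b/130b encoding; a DDR5 channel
# transfers 8 bytes of data per transfer.

def pcie_gbytes_per_s(gt_per_s: float, lanes: int,
                      encoding: float = 128 / 130) -> float:
    """Usable bandwidth in GB/s for ONE direction of a PCIe link."""
    return gt_per_s * lanes * encoding / 8  # 8 bits per byte


def pcie_raw_bidirectional_gbytes_per_s(gt_per_s: float, lanes: int) -> float:
    """Raw (pre-encoding) bandwidth in GB/s, both directions combined."""
    return gt_per_s * lanes * 2 / 8


def ddr5_gbytes_per_s(mt_per_s: int, channels: int,
                      bytes_per_transfer: int = 8) -> float:
    """Peak memory bandwidth in GB/s for one processor."""
    return mt_per_s * channels * bytes_per_transfer / 1000


pcie5_x16_one_way = pcie_gbytes_per_s(32, 16)                # about 63 GB/s
pcie5_x16_raw = pcie_raw_bidirectional_gbytes_per_s(32, 16)  # 128 GB/s
pcie4_x16_raw = pcie_raw_bidirectional_gbytes_per_s(16, 16)  # 64 GB/s
ethernet_400g = 400 / 8                                      # 50 GB/s per direction
memory_per_cpu = ddr5_gbytes_per_s(5600, 8)                  # 358.4 GB/s

print(f"PCIe 5.0 x16, one direction: {pcie5_x16_one_way:.1f} GB/s")
print(f"PCIe 5.0 x16, raw bidirectional: {pcie5_x16_raw:.0f} GB/s")
print(f"400GbE line rate: {ethernet_400g:.0f} GB/s")
print(f"DDR5-5600, 8 channels: {memory_per_cpu:.1f} GB/s per processor")
```

Under these assumptions, one direction of a PCIe 5.0 x16 link carries roughly 63 GB/s, which leaves headroom over the 50 GB/s line rate of a 400 Gb/s network connection.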

Energy efficiency

The direct water cooled solution offers the following energy efficiency features to save energy, reduce operational costs, increase energy availability, and contribute to a green environment:

- Water cooling eliminates power that is drawn by cooling fans in the enclosure and dramatically reduces the required air movement in the server room, which also saves power. In combination with an Energy Aware Runtime environment, savings of as much as 40% are possible in the data center due to the reduced need for air conditioning.

- Water chillers may not be required with a direct water cooled solution. Chillers are a major expense for most geographies and can be reduced or even eliminated because the water temperature can now be 45°C instead of 18°C in an air-cooled environment.

- Up to 100% heat recovery is possible with the direct water cooled design, depending on water temperature chosen. Heat energy absorbed may be reused for heating buildings in the winter, or generating cold through Adsorption Chillers, for further operating expense savings.

- The processors and other microelectronics are run at lower temperatures because they are water cooled, which uses less power, and allows for higher performance through Turbo Mode.

- The processors are run at uniform temperatures because they are cooled in parallel loops, which avoids thermal jitter and provides higher and more reliable performance at the same power.

- Low-voltage 1.1V DDR5 memory offers energy savings compared to 1.2V DDR4 DIMMs, with an approximately 20% decrease in power consumption.

- 80 Plus Titanium power supplies ensure energy efficiency.

- There are power monitoring and management capabilities through the System Management Module in the DW612S enclosure.

- A Lenovo power/energy meter based on the TI INA226 measures DC power for the CPU and the GPU board at higher than 97% accuracy and a 100 Hz sampling frequency, and reports the readings to the XCC. The readings can be accessed both in-band and out-of-band using IPMI raw commands.

- Optional Lenovo XClarity Energy Manager provides advanced data center power notification, analysis, and policy-based management to help achieve lower heat output and reduced cooling needs.

- Optional Energy Aware Runtime provides sophisticated power monitoring and energy optimization on a job-level during the application runtime without impacting performance negatively.
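To illustrate how 100 Hz power readings can be turned into a job-level energy figure, the following minimal sketch integrates evenly spaced power samples with the trapezoidal rule. It starts from a plain list of readings in watts. How the readings are retrieved (the IPMI raw commands) is not covered here, and the function names and the sample trace are hypothetical.

```python
from typing import Sequence

SAMPLE_RATE_HZ = 100  # sampling frequency stated for the power meter


def energy_joules(power_watts: Sequence[float],
                  sample_rate_hz: float = SAMPLE_RATE_HZ) -> float:
    """Integrate evenly spaced power samples using the trapezoidal rule."""
    if len(power_watts) < 2:
        return 0.0
    dt = 1.0 / sample_rate_hz  # seconds between consecutive samples
    inner = sum(power_watts[1:-1])
    return dt * (0.5 * power_watts[0] + inner + 0.5 * power_watts[-1])


def joules_to_kwh(joules: float) -> float:
    """Convert joules to kilowatt-hours (1 kWh = 3.6 MJ)."""
    return joules / 3.6e6


# Hypothetical trace: a constant 700 W load observed for 10 seconds,
# which is 1001 samples (1000 intervals of 10 ms each).
samples = [700.0] * 1001
job_energy = energy_joules(samples)
print(f"{job_energy:.0f} J = {joules_to_kwh(job_energy):.6f} kWh")
```

A real trace would vary over time; the trapezoidal rule simply averages adjacent samples across each 10 ms interval.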

Manageability and security

The following powerful systems management features simplify local and remote management of the SD650-N V3 server:

- The server includes an XClarity Controller 2 (XCC2) to monitor server availability. An optional upgrade to XCC Platinum provides remote control (keyboard video mouse) functions, support for the mounting of remote media files, FIPS 140-3 security, enhanced NIST 800-193 support, boot capture, power capping, and other management and security features.

- Support for industry-standard management protocols: IPMI 2.0, SNMP 3.0, Redfish REST API, and serial console via IPMI.

- Integrated Trusted Platform Module (TPM) 2.0 support enables advanced cryptographic functionality, such as digital signatures and remote attestation.

- Supports Secure Boot to ensure only a digitally signed operating system can be used.

- Industry-standard Advanced Encryption Standard (AES) NI support for faster, stronger encryption.

- With the System Management Module (SMM) installed in the enclosure, only one Ethernet connection is needed to provide remote systems management functions for all SD650-N V3 servers and the enclosure.

- The SMM has two Ethernet ports, which allow a single Ethernet connection to be daisy chained across 7 enclosures and 84 servers, thereby significantly reducing the number of Ethernet switch ports needed to manage an entire rack of SD650-N V3 servers and DW612S enclosures.

- The DW612S enclosure includes drip sensors that monitor the inlet and outlet manifold quick connect couplers; leaks are reported via the SMM.

- The server supports Lenovo XClarity suite software with Lenovo XClarity Administrator, Lenovo XClarity Provisioning Manager, and XClarity Energy Manager. They are described further in the Software section of this product guide.

- Lenovo HPC & AI Software Stack provides our HPC customers with a fully tested and supported open-source software stack that enables administrators and users to make the most effective and environmentally sustainable use of Lenovo supercomputing capabilities.

- Our Confluent management system and Lenovo Intelligent Computing Orchestration (LiCO) web portal provide an interface designed to abstract the users from the complexity of HPC cluster orchestration and AI workloads management, making open-source HPC software consumable for every customer.

- LiCO web portal provides workflows for both AI and HPC, and supports multiple AI frameworks, allowing you to leverage a single cluster for diverse workload requirements.
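As an illustration of the Redfish REST API support listed above, the following minimal sketch reads the power state of each system behind a management controller. It relies only on the standard DMTF Redfish resource layout (the `/redfish/v1/Systems` collection, its `Members` list, and the `PowerState` property). The host name, the credentials, and the sample payloads are hypothetical, and the sketch does not describe any XCC-specific behavior.

```python
import base64
import json
import urllib.request
from typing import List, Optional


def fetch_json(url: str, user: str, password: str,
               context: Optional[object] = None) -> dict:
    """GET a Redfish resource using HTTP Basic authentication."""
    request = urllib.request.Request(url)
    token = base64.b64encode(f"{user}:{password}".encode()).decode()
    request.add_header("Authorization", f"Basic {token}")
    with urllib.request.urlopen(request, context=context) as response:
        return json.load(response)


def system_urls(collection: dict, base_url: str) -> List[str]:
    """Build absolute URLs for every member of a Systems collection."""
    return [base_url.rstrip("/") + member["@odata.id"]
            for member in collection.get("Members", [])]


def power_state(system: dict) -> str:
    """Return the PowerState property, or 'Unknown' if it is absent."""
    return system.get("PowerState", "Unknown")


# Offline demonstration with hypothetical payloads (no network access).
BASE_URL = "https://xcc.example"  # hypothetical management address
sample_collection = {"Members": [{"@odata.id": "/redfish/v1/Systems/1"}]}
sample_system = {"Id": "1", "PowerState": "On"}

urls = system_urls(sample_collection, BASE_URL)
print(urls, power_state(sample_system))

# Against a real controller, the flow would look like this:
#   collection = fetch_json(BASE_URL + "/redfish/v1/Systems", user, password)
#   for url in system_urls(collection, BASE_URL):
#       print(url, power_state(fetch_json(url, user, password)))
```

Member identifiers vary between implementations, so the sketch follows the `@odata.id` links returned by the collection rather than hard-coding a system path.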

Availability and serviceability

The SD650-N V3 node and DW612S enclosure provide the following features to simplify serviceability and increase system uptime:

- Designed to run 24 hours a day, 7 days a week

- Depending on the configuration and node population, the DW612S enclosure supports N+1 power policies for its power supplies, which means greater system uptime.

- All supported power supplies are hot-swappable, including the water-cooled power supplies.

- Toolless cover removal on the trays provides easy access to upgrades and serviceable parts, such as adapters and memory.

- The server uses ECC memory and supports memory RAS features including Single Device Data Correction (SDDC, also known as Chipkill), Patrol/Demand Scrubbing, Bounded Fault, DRAM Address Command Parity with Replay, DRAM Uncorrected ECC Error Retry, On-die ECC, ECC Error Check and Scrub (ECS), and Post Package Repair.

- Proactive Platform Alerts (including PFA and SMART alerts): Processors, voltage regulators, memory, internal storage (HDDs and SSDs, NVMe SSDs, M.2 storage), fans, power supplies, and server ambient and subcomponent temperatures. Alerts can be surfaced through the XClarity Controller to managers such as Lenovo XClarity Administrator and other standards-based management applications. These proactive alerts let you take appropriate actions in advance of possible failure, thereby increasing server uptime and application availability.

- The XCC offers optional remote management capability and can enable remote keyboard, video, and mouse (KVM) control and remote media for the node.

- Built-in diagnostics in UEFI, using Lenovo XClarity Provisioning Manager, speed up troubleshooting tasks to reduce service time.

- Lenovo XClarity Provisioning Manager supports diagnostics and can save service data to a USB key drive or remote CIFS share folder for troubleshooting, which reduces service time.

- Auto restart in the event of a momentary loss of AC power (based on power policy setting in the XClarity Controller service processor)

- Virtual reseat is a supported feature of the System Management Module (SMM2) that simulates, from a remote location, physically removing the node from AC power and reconnecting it.

- There is a three-year customer replaceable unit and onsite limited warranty, with next business day 9x5 coverage. Optional warranty upgrades and extensions are available.

- With water cooling, system fans are not required. This results in significantly reduced noise levels on the data center floor, a significant benefit to personnel having to work on site.

Components and connectors

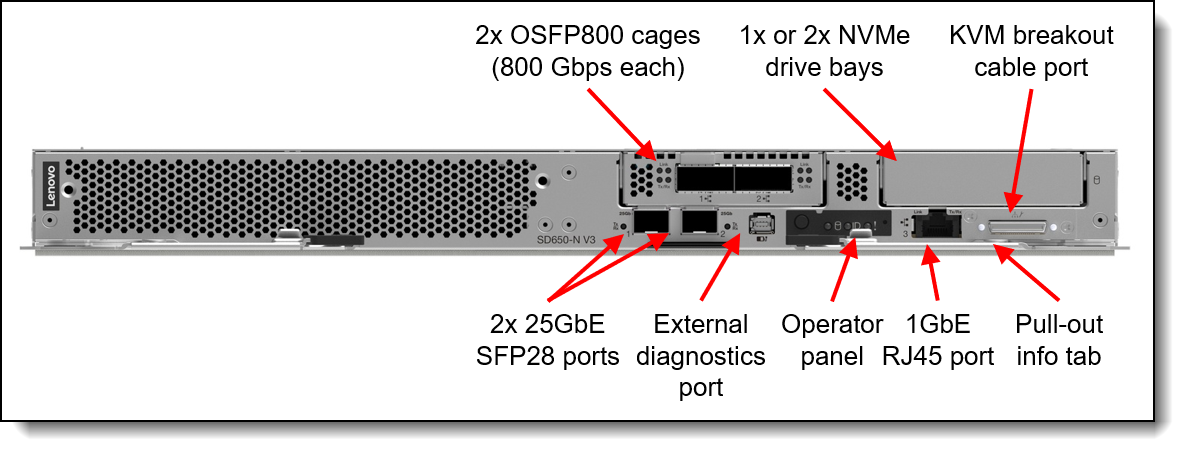

The front of the SD650-N V3 node is shown in the following figure.

Figure 2. Front view of the ThinkSystem SD650-N V3 node

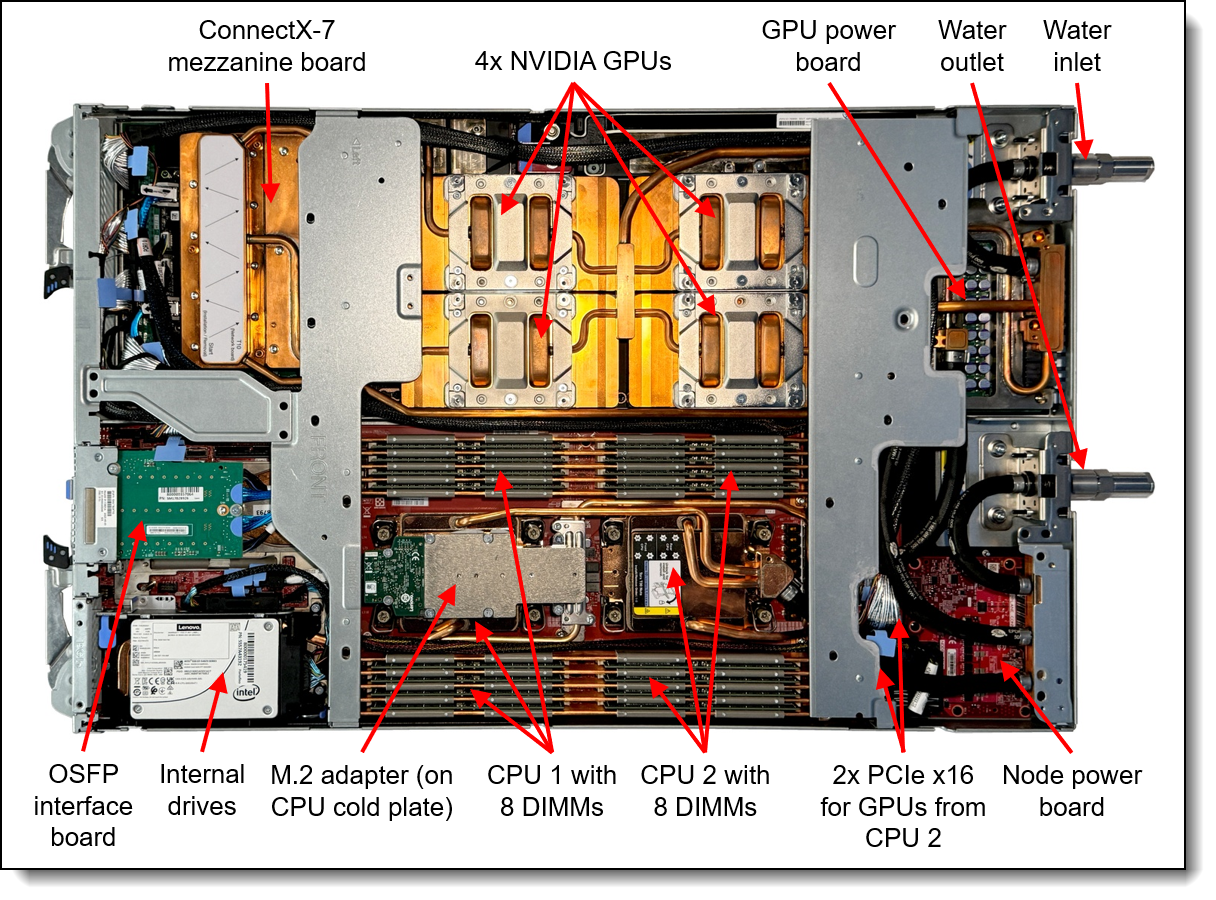

The following figure shows key components internal to the server tray.

Figure 3. Inside view of the SD650-N V3 node in the water-cooled tray

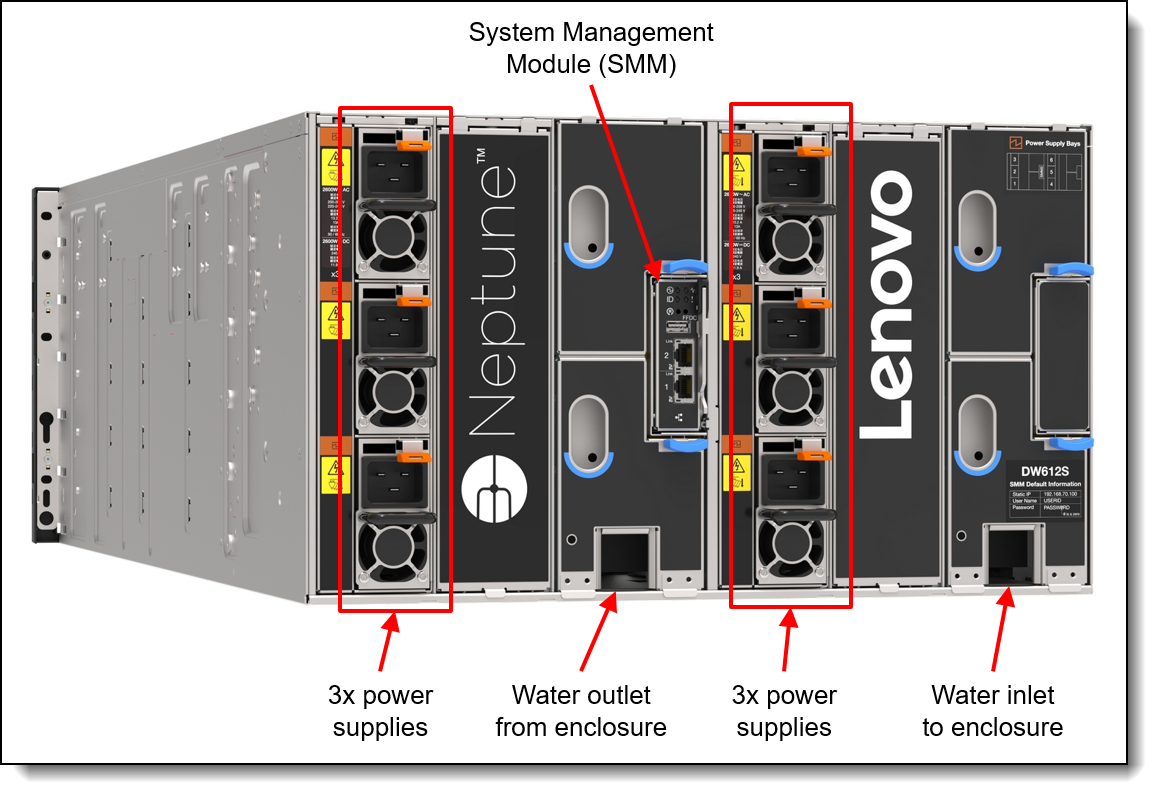

The compute nodes are installed in the ThinkSystem DW612S enclosure, as shown in the following figure.

The rear of the DW612S enclosure contains the power supplies, cooling water manifolds, and the System Management Module. The following figure shows the rear of the enclosure with 6x air-cooled power supplies.

Figure 5. Rear view of the DW612S enclosure with 6 air-cooled power supplies

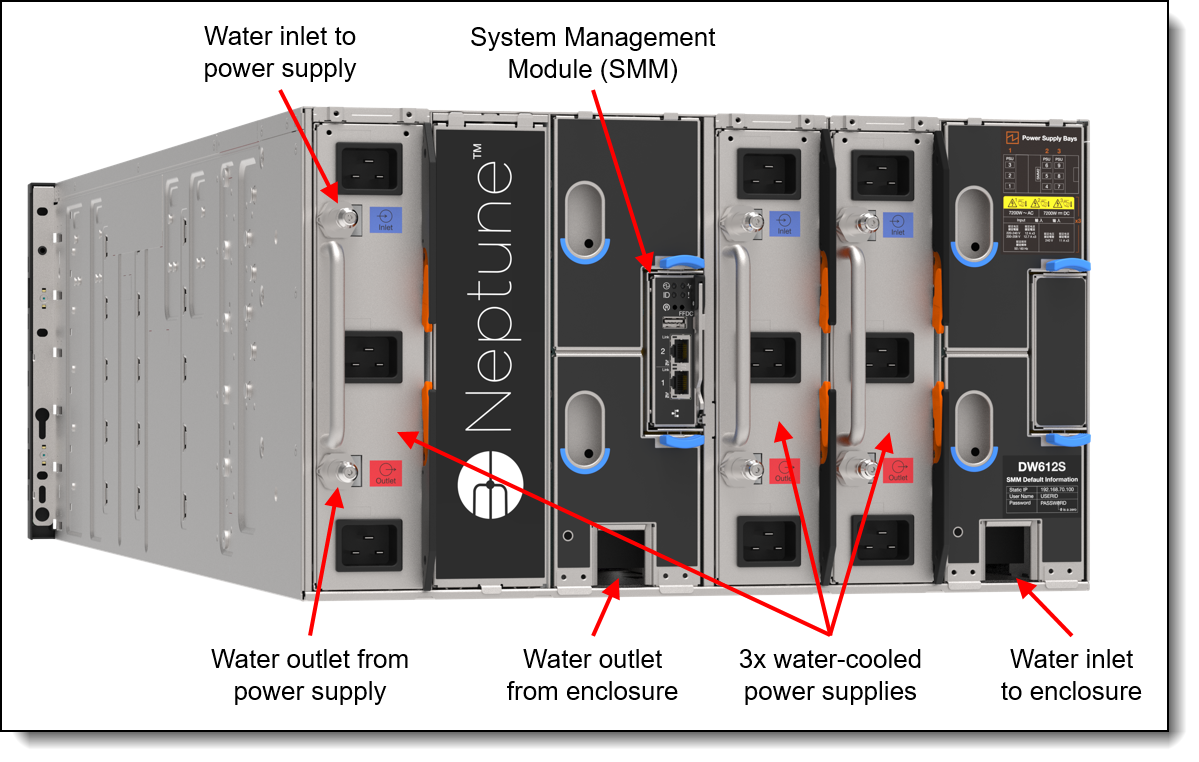

The enclosure also supports water-cooled power supplies for an increased level of heat removal using water. The following figure shows the enclosure with 3 water-cooled power supplies installed.

Figure 6. Rear view of the DW612S enclosure with 3 water-cooled power supplies

Standard specifications - SD650-N V3 tray

The following table lists the standard specifications of the SD650-N V3 server tray.

| Components | Specification |

|---|---|

| Machine type | 7D7N - 3-year warranty |

| Form factor | 1U server node mounted on a 1U water-cooled server tray |

| Enclosure support | ThinkSystem DW612S Neptune DWC Enclosure |

| Processor | Two 5th Gen Intel Xeon Scalable processors (formerly codenamed "Emerald Rapids"), two 4th Gen Intel Xeon Scalable processors (formerly codenamed "Sapphire Rapids"), or two Intel Xeon CPU Max Series processors (formerly codenamed "Sapphire Rapids HBM") per node. Supports processors up to 64 cores, core speeds of up to 3.9 GHz, and TDP ratings of up to 385W. Supports PCIe 5.0 for high performance connectivity to GPUs. |

| GPUs | NVIDIA HGX H200 or H100 4-GPU board - 4x GPUs interconnected using NVLink 4.0 links |

| Chipset | Intel C741 "Emmitsburg" chipset, part of the platform codenamed "Eagle Stream" |

| Memory | 16 DIMM slots with two processors (8 DIMM slots per processor) per node. Each processor has 8 memory channels, with 1 DIMM per channel (DPC). Lenovo TruDDR5 RDIMMs, 3DS RDIMMs, and 9x4 RDIMMs are supported, up to 5600 MHz, depending on the processor |

| Persistent memory | Not supported |

| Memory maximum | Up to 2TB per node with 16x 128GB 3DS RDIMMs |

| Memory protection | ECC, SDDC, Patrol/Demand Scrubbing, Bounded Fault, DRAM Address Command Parity with Replay, DRAM Uncorrected ECC Error Retry, On-die ECC, ECC Error Check and Scrub (ECS), Post Package Repair |

| Disk drive bays | Supports one of the following: two E3.S EDSFF NVMe SSDs, two 7mm NVMe SSDs, or one 15mm NVMe SSD. The node also supports one high-speed M.2 NVMe SSD with a PCIe 4.0 x4 connection, installed on an M.2 adapter mounted on top of the front processor |

| Maximum internal storage | |

| Storage controllers | Onboard NVMe (RAID functions using Intel VROC) |

| Optical drive bays | No internal bays; use an external USB drive. |

| Network interfaces | Optional 2x OSFP 800G connectors provide 800 Gb/s GPU Direct InfiniBand NDRx2 connectivity to four onboard NVIDIA ConnectX-7 controllers; 2x 25 Gb Ethernet SFP28 onboard connectors based on Mellanox ConnectX-4 Lx controller (support 10/25Gb); 1x 1 Gb Ethernet RJ45 onboard connector based on Intel I210 controller. Onboard 1Gb port and 25Gb port 1 can optionally be shared with the XClarity Controller 2 (XCC) management processor for Wake-on-LAN and NC-SI support. |

| PCIe slots | None. |

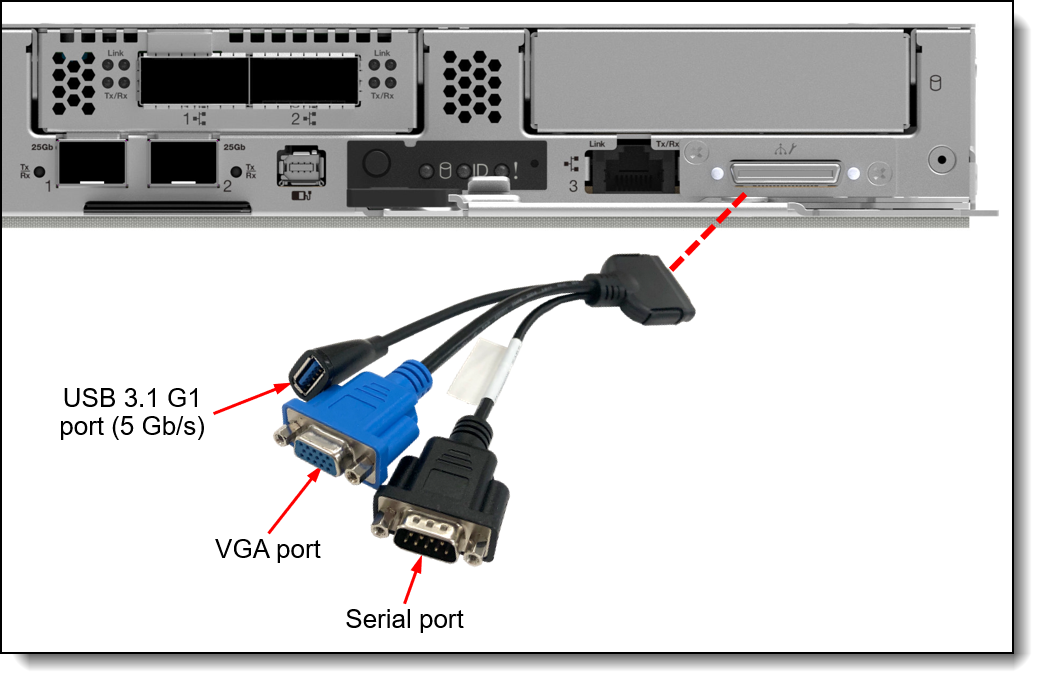

| Ports | External diagnostics port, console connector (for a breakout cable that provides one VGA port, one USB 3.1 (5 Gb/s) port and one DB9 serial port for local connectivity). Additional ports provided by the enclosure as described in the Enclosure specifications section. |

| Video | Embedded video graphics with 16 MB memory with 2D hardware accelerator, integrated into the XClarity Controller. Maximum resolution is 1920x1200 32bpp at 60Hz. |

| Security features | Power-on password, administrator's password, Trusted Platform Module (TPM), supporting TPM 2.0. In China only, optional Nationz TPM 2.0 plug-in module (support is planned). |

| Systems management | Operator panel with status LEDs. Optional External Diagnostics Handset with LCD display. XClarity Controller 2 (XCC2) embedded management based on the ASPEED AST2600 baseboard management controller (BMC), XClarity Administrator centralized infrastructure delivery, XClarity Integrator plugins, and XClarity Energy Manager centralized server power management. Optional XCC Platinum to enable remote control functions and other features. Lenovo power/energy meter based on TI INA226 for 100Hz power measurements with >97% accuracy. System Management Module (SMM2) in the DW612S enclosure provides additional systems management functions. |

| Operating systems supported | Red Hat Enterprise Linux, SUSE Linux Enterprise Server, and Ubuntu are Supported & Certified. Rocky Linux and AlmaLinux are Tested. See the Operating system support section for details and specific versions. |

| Limited warranty | Three-year customer-replaceable unit and onsite limited warranty with 9x5 next business day (NBD). |

| Service and support | Optional service upgrades are available through Lenovo Services: 4-hour or 2-hour response time, 6-hour fix time, 1-year or 2-year warranty extension, software support for Lenovo hardware and some third-party applications. |

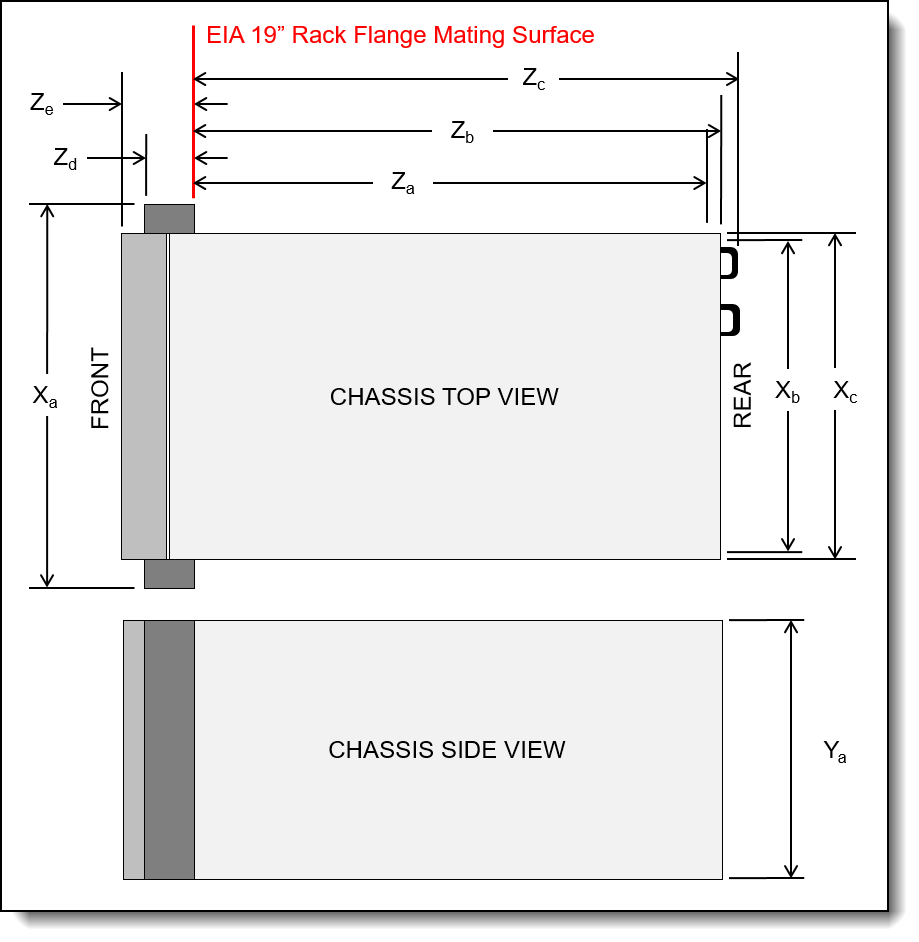

| Dimensions | Width: 438 mm (17.2 inches), height: 41 mm (1.6 inches), depth: 714 mm (28.1 inches) |

| Weight | 22.7 kg (50.05 lbs) |

Standard specifications - DW612S enclosure

The ThinkSystem DW612S enclosure provides shared high-efficiency power supplies. The SD650-N V3 servers connect to the midplane of the DW612S enclosure. This midplane connection is for power and control only; the midplane does not provide any I/O connectivity.

The following table lists the standard specifications of the enclosure.

| Components | Specification |

|---|---|

| Machine type | 7D1L - 3-year warranty |

| Form factor | 6U rack-mounted enclosure. |

| Maximum number of SD650-N V3 nodes supported | Up to 6x nodes per enclosure in 6x SD650-N V3 server trays (1 node per tray). |

| Node support | The DW612S supports all ThinkSystem V3 and V2 water-cooled systems (systems can coexist in the same DW612S enclosure). When mixing, install in the following order, from the bottom up: SD665-N V3, SD650-N V3, SD665 V3, SD650-I V3, SD650-N V2, SD650 V3, SD650 V2 |

| Enclosures per rack | Up to six DW612S enclosures per 42U rack and up to seven DW612S enclosures per 48U rack. |

| Midplane | Passive midplane provides connections from the nodes in the front to the power supplies and fans at the rear. Provides signals to control fan speed, power consumption, and node throttling as needed. |

| System Management Module (SMM) | The hot-swappable System Management Module (SMM2) is the management device for the enclosure. Provides integrated systems management functions and controls the power and cooling features of the enclosure. Provides remote browser and CLI-based user interfaces for remote access via the dedicated Gigabit Ethernet port. Remote access is to both the management functions of the enclosure as well as the XClarity Controller (XCC) in each node. The SMM has two Ethernet ports, which enable a single incoming Ethernet connection to be daisy chained across 7 enclosures and 84 nodes, thereby significantly reducing the number of Ethernet switch ports needed to manage an entire rack of SD650-N V3 nodes and enclosures. |

| Ports | Two RJ45 ports on the rear of the enclosure for 10/100/1000 Ethernet connectivity to the SMM for power and cooling management. |

| I/O architecture | None integrated. Use top-of-rack networking and storage switches. |

| Power supplies | 6x or 9x air-cooled hot-swap power supplies, or 2x or 3x water-cooled hot-swap power supplies, depending on the power requirements of the installed server node trays. Power supplies installed at the rear of the enclosure. Single power domain supplies power to all nodes. Optional redundancy (N+1 or N+N) and oversubscription, depending on configuration and node population. Each power supply has an integrated fan. 80 PLUS Titanium or Platinum certified depending on the power supply. Built-in overload and surge protection. |

| Cooling | Direct water cooling supplied by water manifolds connected from the rear of the enclosure. |

| System LEDs | SMM has four LEDs: system error, identification, status, and system power. Each power supply has AC, DC, and error LEDs. Nodes have more LEDs. |

| Systems management | Browser-based enclosure management through an Ethernet port on the SMM at the rear of the enclosure. Integrated Ethernet switch provides direct access to the XClarity Controller (XCC) embedded management of the installed nodes. Nodes provide more management features. |

| Temperature | See Operating Environment for more information. |

| Electrical power | 200 V - 240 V ac input (nominal), 50 or 60 Hz |

| Power cords | One C19 AC power cord for each air-cooled power supply; three C19 AC power cords for each water-cooled power supply |

| Limited warranty | Three-year customer-replaceable unit and onsite limited warranty with 9x5/NBD. |

| Dimensions | Width: 447 mm (17.6 in.), height: 264 mm (10.4 in.), depth: 933 mm (36.7 in.). See Physical and electrical specifications for details. |

| Weight | |
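The density figures in the two specification tables can be combined into per-rack totals. The following minimal sketch uses only numbers stated in the tables above (6 nodes per enclosure, 2 processors and 4 GPUs per node, a 6U enclosure, and 6 or 7 enclosures per 42U or 48U rack). The function and key names are illustrative.

```python
NODES_PER_ENCLOSURE = 6   # SD650-N V3: one node per tray, six trays
CPUS_PER_NODE = 2
GPUS_PER_NODE = 4
ENCLOSURE_HEIGHT_U = 6
ENCLOSURES_PER_RACK = {42: 6, 48: 7}  # rack height in U -> max enclosures


def rack_totals(rack_height_u: int) -> dict:
    """Return node, CPU, and GPU counts for a fully populated rack."""
    enclosures = ENCLOSURES_PER_RACK[rack_height_u]
    nodes = enclosures * NODES_PER_ENCLOSURE
    return {
        "enclosures": enclosures,
        "nodes": nodes,
        "cpus": nodes * CPUS_PER_NODE,
        "gpus": nodes * GPUS_PER_NODE,
        "free_u": rack_height_u - enclosures * ENCLOSURE_HEIGHT_U,
    }


for height in sorted(ENCLOSURES_PER_RACK):
    print(height, rack_totals(height))
```

With these inputs, a 42U rack holds 36 nodes and 144 GPUs, and a 48U rack holds 42 nodes and 168 GPUs, with 6U left in each case for switches and other equipment.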

Models

There are no standard SD650-N V3 models; all servers must be configured by using the configure-to-order (CTO) process with the Lenovo Cluster Solutions configurator (x-config). The ThinkSystem SD650-N V3 machine type is 7D7N.

The following table lists the base CTO model and base feature code.

Enclosure models

There are no standard models of the DW612S enclosure. All enclosures must be configured by using the CTO process. The machine type is 7D1L.

The following table lists the base CTO model and base feature code.

Manifold assembly

The manifold provides the water supply and return to the DW612S Enclosure. It can be connected through the Eaton Ball Valves (Stainless steel V2A, Type FD83-2046-16-16) to a water loop in the data center that is connected to a centralized coolant distribution unit (CDU) or be ordered with an in-rack CDU.

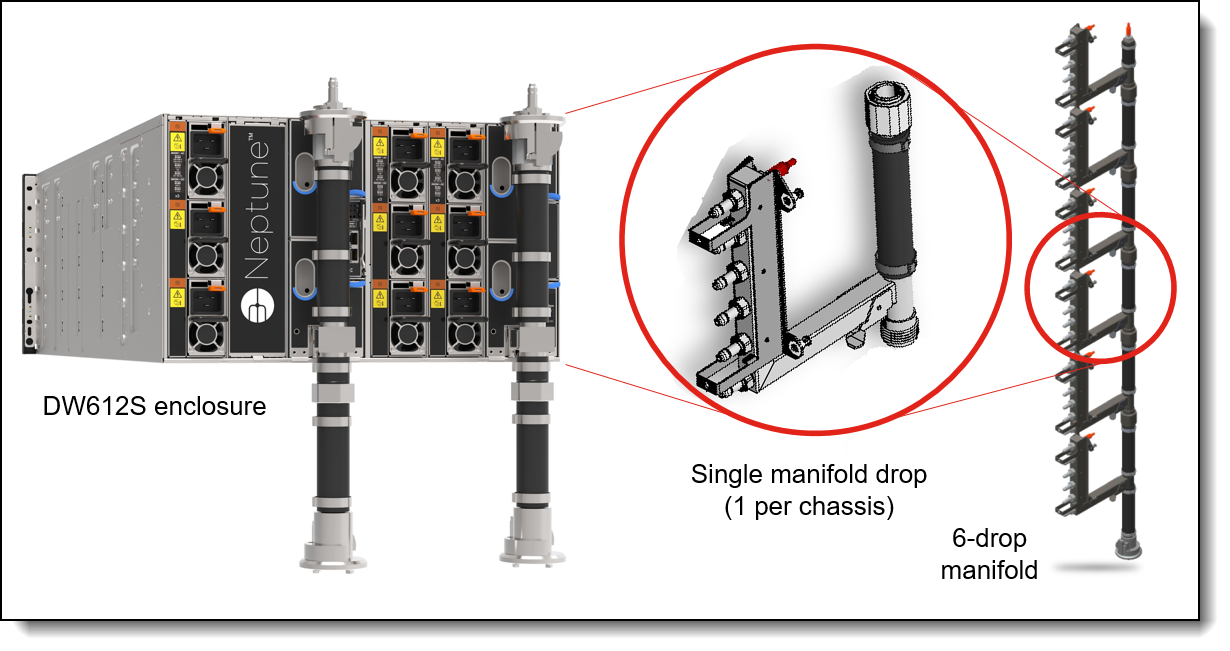

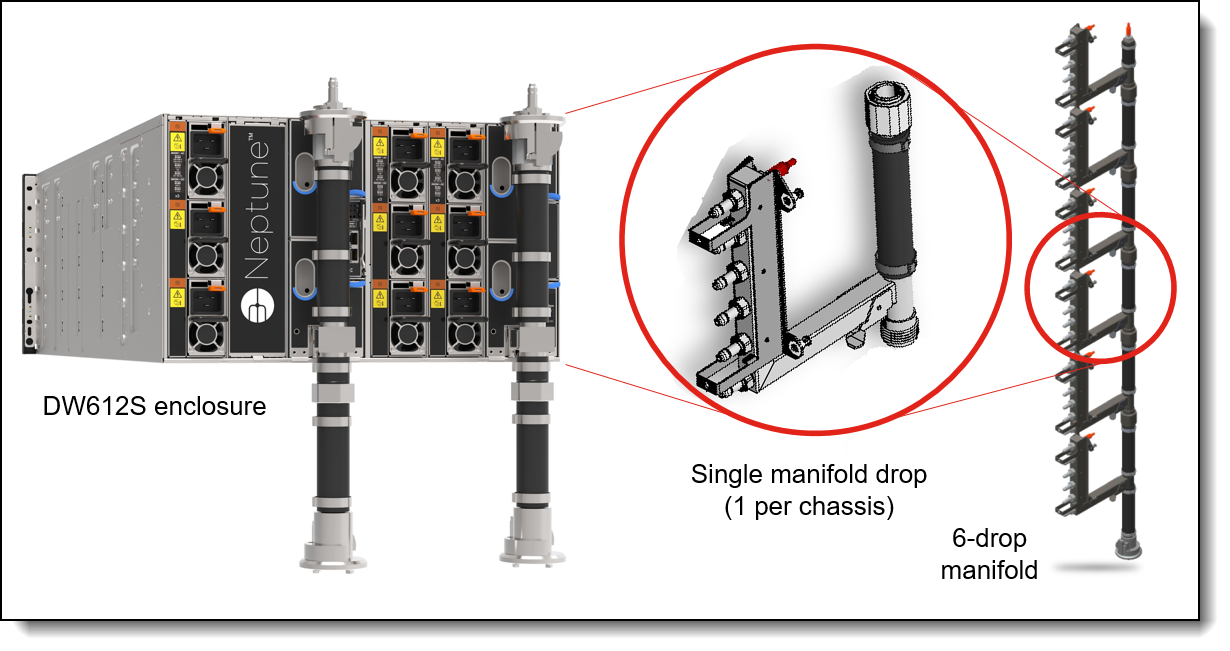

Figure 8. DW612S enclosure and manifold assembly

The manifold is ordered using the CTO process in the configurators using machine type 5469. The following table lists the base CTO model.

| Machine Type/Model | Description |

|---|---|

| 5469HC1 | Lenovo Neptune DWC Node Manifold |

The following table lists the base feature code for CTO configurations when connecting to a data center level water distribution. Select the correct feature code based on the number of enclosures installed in the rack. The feature code for the water-cooled power supplies (PSUs) is auto-derived when you select the PSUs in the configuration and is only supported with 6 enclosures.

The following table lists the base feature code for CTO configurations when connecting to the in-rack CDU.

For additional information, see the Cooling section.

To support the onsite setup for the direct water-cooled solution, a Commissioning Kit is available providing a flow meter, bleed hose, pressure gauge and vent valve. Ordering information is listed in the following table. Either kit supports the DW612S enclosure.

In-rack CDU assembly

The RM100 In-Rack Coolant Distribution Unit (CDU) can provide 100kW cooling capacity within the rack cabinet. It is designed as a 4U high rack device installed at the bottom of the rack. The CDU is supported in the 42U and 48U Heavy Duty Rack Cabinets.

Rack support with the DW612S enclosure is as follows:

- 42U rack cabinet: In-Rack CDU with 5 enclosures; no support for water-cooled power supplies

- 48U rack cabinet: In-Rack CDU with 6 enclosures; supports water-cooled power supplies
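The rack support rules above can be sanity-checked with simple arithmetic. The following minimal sketch assumes a 4U CDU, 6U enclosures, and six SD650-N V3 nodes per enclosure, as stated in this guide. The per-node figure is only the average share of the 100kW CDU capacity. It is not a node power specification, and it ignores any other heat sources on the loop such as water-cooled power supplies.

```python
CDU_CAPACITY_KW = 100.0
CDU_HEIGHT_U = 4
ENCLOSURE_HEIGHT_U = 6
NODES_PER_ENCLOSURE = 6
ENCLOSURES_WITH_CDU = {42: 5, 48: 6}  # rack height in U -> enclosures


def cdu_rack_budget(rack_height_u: int) -> dict:
    """Rack-unit usage and average cooling share per node with an in-rack CDU."""
    enclosures = ENCLOSURES_WITH_CDU[rack_height_u]
    nodes = enclosures * NODES_PER_ENCLOSURE
    used_u = CDU_HEIGHT_U + enclosures * ENCLOSURE_HEIGHT_U
    return {
        "enclosures": enclosures,
        "nodes": nodes,
        "used_u": used_u,
        "free_u": rack_height_u - used_u,
        "avg_kw_per_node": CDU_CAPACITY_KW / nodes,
    }


for height in sorted(ENCLOSURES_WITH_CDU):
    budget = cdu_rack_budget(height)
    print(f"{height}U: {budget['nodes']} nodes, {budget['used_u']}U used, "
          f"{budget['avg_kw_per_node']:.2f} kW average cooling share per node")
```

Actual sizing depends on the configuration and water temperature and should follow the CDU documentation referenced in this section.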

For information about the 42U and 48U Heavy Duty Rack Cabinets, see the product guide:

https://lenovopress.lenovo.com/lp1498-lenovo-heavy-duty-rack-cabinets

The following figure shows the RM100 CDU.

Figure 9. RM100 In-Rack Coolant Distribution Unit

The CDU can be ordered using the CTO process in the configurators using machine type 7DBL. The following table lists the base CTO model and base feature code.

| CTO model | Base feature | Description |

|---|---|---|

| 7DBLCTOLWW | BRL4 | Lenovo Neptune DWC RM100 In-Rack CDU |

For details and exact specification of the CDU, see the In-Rack CDU Operation & Maintenance Guide:

https://pubs.lenovo.com/hdc_rackcabinet/rm100_user_guide.pdf

Professional Services: The factory integration of the In-Rack CDU requires Lenovo Professional Services review and approval for warranty and associated extended services. Before ordering the CDU and manifold, contact the Lenovo Professional Services team.

The following table lists additional feature codes for CTO configurations. They are auto-derived when you select the In-Rack CDU for the configuration.

Processors

The SD650-N V3 node supports two processors as follows:

- Two 5th Gen Intel Xeon Scalable processors (formerly codenamed "Emerald Rapids")

- Two 4th Gen Intel Xeon Scalable processors (formerly codenamed "Sapphire Rapids")

- Two Intel Xeon CPU Max Series processors (formerly codenamed "Sapphire Rapids HBM")

Note: A configuration of one processor is not supported.

Processor options

All supported processors have the following characteristics:

- 8 DDR5 memory channels at 1 DIMM per channel

- Up to 4 UPI links between processors at up to 20 GT/s

- Up to 80 PCIe 5.0 I/O lanes

Mainstream column: Processors marked as Mainstream can be configured in DCSC (select Full Mode) or in x-config. For processors marked as Extended, please contact your Lenovo sales representative.

The following table lists the 5th Gen processors that are currently supported by the SD650-N V3.

The following table lists the 4th Gen processors that are currently supported by the SD650-N V3.

Configuration notes:

- Single-processor configurations are not supported

Intel Xeon CPU Max Series processors

The SD650-N V3 server also supports Intel Xeon CPU Max Series processors, each of which includes 64GB of integrated High Bandwidth Memory (HBM2e), for a total of 128GB of HBM in a two-processor node. Intel Xeon CPU Max Series processors support three different memory operating modes, configured via a setting in UEFI. You can specify, at time of order, which HBM mode you wish to enable, using the feature codes in the following table.

Tip: With Intel Xeon CPU Max processors, if your application has a small enough memory working set to fit entirely in less than 128GB, then it would be possible to not install any DDR5 memory DIMMs in the server.
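The check described in the tip can be written as a small helper. This is a minimal sketch that uses only the capacities stated above (64GB of HBM per processor, two processors per node). The `reserved_gb` parameter is a hypothetical allowance for the operating system and other resident software, and is not a figure from this guide.

```python
HBM_PER_PROCESSOR_GB = 64
PROCESSORS_PER_NODE = 2
NODE_HBM_GB = HBM_PER_PROCESSOR_GB * PROCESSORS_PER_NODE  # 128 GB


def fits_in_hbm(working_set_gb: float, reserved_gb: float = 0.0) -> bool:
    """True if the working set (plus any reserved memory) is under 128 GB."""
    return working_set_gb + reserved_gb < NODE_HBM_GB


# Hypothetical working-set sizes in GB.
for size in (96, 120, 128):
    verdict = "may run without DDR5" if fits_in_hbm(size, reserved_gb=8) else "needs DDR5"
    print(f"{size} GB working set: {verdict}")
```

Working sets at or above the HBM capacity need DDR5 DIMMs installed alongside the integrated HBM.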

The following table lists the Intel Xeon CPU Max Series processors supported by the SD650-N V3.

Processor features

Processors supported by the SD650-N V3 introduce new embedded accelerators to add even more processing capability:

- QuickAssist Technology (Intel QAT)

Help reduce system resource consumption by providing accelerated cryptography, key protection, and data compression with Intel QuickAssist Technology (Intel QAT). By offloading encryption and decryption, this built-in accelerator helps free up processor cores and helps systems serve a larger number of clients.

- Intel Dynamic Load Balancer (Intel DLB)

Improve the system performance related to handling network data on multi-core Intel Xeon Scalable processors. Intel Dynamic Load Balancer (Intel DLB) enables the efficient distribution of network processing across multiple CPU cores/threads and dynamically distributes network data across multiple CPU cores for processing as the system load varies. Intel DLB also restores the order of networking data packets processed simultaneously on CPU cores.

- Intel Data Streaming Accelerator (Intel DSA)

Drive high performance for storage, networking, and data-intensive workloads by improving streaming data movement and transformation operations. Intel Data Streaming Accelerator (Intel DSA) is designed to offload the most common data movement tasks that cause overhead in data center-scale deployments. Intel DSA helps speed up data movement across the CPU, memory, and caches, as well as all attached memory, storage, and network devices.

- Intel In-Memory Analytics Accelerator (Intel IAA)

Run database and analytics workloads faster, with potentially greater power efficiency. Intel In-Memory Analytics Accelerator (Intel IAA) increases query throughput and decreases the memory footprint for in-memory database and big data analytics workloads. Intel IAA is ideal for in-memory databases, open source databases and data stores like RocksDB, Redis, Cassandra, and MySQL.

- Intel Advanced Matrix Extensions (Intel AMX)

Intel Advanced Matrix Extensions (Intel AMX) is a built-in accelerator in all Silver, Gold, and Platinum processors that significantly improves deep learning training and inference. With Intel AMX, you can fine-tune deep learning models or train small to medium models in just minutes. Intel AMX offers discrete accelerator performance without added hardware and complexity.

The processors also support a separate and encrypted memory space, known as the SGX Enclave, for use by Intel Software Guard Extensions (SGX). The size of the SGX Enclave supported varies by processor model. Intel SGX offers hardware-based memory encryption that isolates specific application code and data in memory. It allows user-level code to allocate private regions of memory (enclaves) which are designed to be protected from processes running at higher privilege levels.

The following table summarizes the key features of all supported 5th Gen processors in the SD650-N V3.

† The maximum single-core frequency at which the processor is capable of operating

* L3 cache is 1.875 MB per core or larger. Processors with a larger L3 cache per core are marked with an *

The following table summarizes the key features of all supported 4th Gen processors in the SD650-N V3.

† The maximum single-core frequency at which the processor is capable of operating

* L3 cache is 1.875 MB per core or larger. Processors with a larger L3 cache per core are marked with an *

The following table summarizes the key features of all supported Intel Xeon CPU Max Series processors in the SD650-N V3.

† The maximum single-core frequency at which the processor is capable of operating

* L3 cache is 1.875 MB per core or larger. Processors with a larger L3 cache per core are marked with an *

Intel On Demand feature licensing

Intel On Demand is a licensing offering from Lenovo for certain 4th Gen and 5th Gen Intel Xeon Scalable processors that implements software-defined silicon (SDSi) features. The licenses allow customers to activate the embedded accelerators and to increase the SGX Enclave size in specific processor models as their workload and business needs change.

The available upgrades are the following:

- Up to 4x QuickAssist Technology (Intel QAT) accelerators

- Up to 4x Intel Dynamic Load Balancer (Intel DLB) accelerators

- Up to 4x Intel Data Streaming Accelerator (Intel DSA) accelerators

- Up to 4x Intel In-Memory Analytics Accelerator (Intel IAA) accelerators

- 512GB SGX Enclave, an encrypted memory space for use by Intel Software Guard Extensions (SGX)

See the Processor features section for a brief description of each accelerator and the SGX Enclave.

The following table lists the ordering information for the licenses. Accelerator licenses are bundled into suites according to the workloads that benefit most from the additional accelerators.

Licenses can be activated in the factory (CTO orders) using feature codes, or as field upgrades using the option part numbers. Field upgrades allow customers to activate the accelerators or increase the SGX Enclave size only when their applications can best take advantage of them.

Intel On Demand is licensed on individual processors. For servers with two processors, customers will need a license for each processor and the licenses of the two processors must match. If a second processor is added as a field upgrade, its Intel On Demand licenses must match those of the first processor.

Each license enables a certain quantity of embedded accelerators. The total number of accelerators available after activation is listed in the table. For example, Intel On Demand Communications & Storage Suite 4 (4L47A89451), once applied to the server, will result in a total of 4x QAT, 4x DLB and 4x DSA accelerators being enabled on the processor. The number of IAA accelerators is unchanged in this example.

The following table lists the 5th Gen processors that support Intel On Demand. The table shows the default accelerators and default SGX Enclave size, and it shows (with green highlight) what the total new accelerators and SGX Enclave would be once the Intel On Demand features have been activated.

The following table lists the 4th Gen processors that support Intel On Demand. The table shows the default accelerators and default SGX Enclave size, and it shows (with green highlight) what the total new accelerators and SGX Enclave would be once the Intel On Demand features have been activated.

Configuration rules:

- Not all processors support Intel On Demand upgrades - see the table for those that do not support Intel On Demand

- Upgrades can be performed in the factory (feature codes) or in the field (part numbers) but not both, and only one time

- Upgrades cannot be removed once activated

- SGX Enclave upgrades are independent of the accelerator upgrades; install either or both as desired

- For processors that support more than one upgrade, all upgrades must be performed at the same time

- Only one of each type of upgrade can be applied to a processor (e.g., 2x BX9A is not supported; 4x BX9B is not supported)

- The following processors support two accelerator upgrades, Intel On Demand Analytics Suite 4 (4L47A89452) and Intel On Demand Communications & Storage Suite 4 (4L47A89451); the tables above show the accelerators based on both upgrades being applied.

- Intel Xeon Platinum 8460Y+

- Intel Xeon Platinum 8480+

- Intel Xeon Platinum 8568Y+

- Intel Xeon Platinum 8592+

- The number of accelerators listed for each upgrade is the number of accelerators that will be active once the upgrade is complete (i.e., the total number, not the number to be added)

- If a server has two processors, then two feature codes must be selected, one for each processor. The upgrades on the two processors must be identical.

- If a one-processor server with Intel On Demand features activated on it has a 2nd processor added as a field upgrade, the 2nd processor must also have the same features activated by purchasing the appropriate part numbers.
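Two of the configuration rules above (only one of each type of upgrade per processor, and identical upgrades on both processors) can be checked mechanically. The following is a minimal sketch only; the `ProcessorUpgrades` class and `check_on_demand` function are hypothetical names invented for illustration and are not part of any Lenovo or Intel tool. The part numbers are the two suites named above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProcessorUpgrades:
    """Hypothetical record of the Intel On Demand upgrades chosen for one CPU."""
    part_numbers: tuple  # option part numbers or feature codes, one per upgrade


def check_on_demand(cpu1: ProcessorUpgrades, cpu2: ProcessorUpgrades) -> list:
    """Return a list of rule violations; an empty list means the pair is valid."""
    problems = []
    for label, cpu in (("CPU 1", cpu1), ("CPU 2", cpu2)):
        # Only one of each type of upgrade can be applied to a processor.
        if len(set(cpu.part_numbers)) != len(cpu.part_numbers):
            problems.append(f"{label}: the same upgrade is listed more than once")
    # The upgrades on the two processors must be identical.
    if sorted(cpu1.part_numbers) != sorted(cpu2.part_numbers):
        problems.append("CPU 1 and CPU 2 must carry identical upgrades")
    return problems


both_suites = ("4L47A89451", "4L47A89452")
print(check_on_demand(ProcessorUpgrades(both_suites), ProcessorUpgrades(both_suites)))
```

The sketch does not check whether a given processor model supports a given upgrade; that information is only available in the tables.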

UEFI operating modes

The SD650-N V3 offers preset operating modes that affect energy consumption and performance. These modes are a collection of predefined low-level UEFI settings that simplify the task of tuning the server to suit your business and workload requirements.

The following table lists the feature codes that allow you to specify the mode you wish to preset in the factory for CTO orders.

UK and EU customers: For compliance with the ERP Lot9 regulation, you should select feature BFYE. For some systems, you may not be able to make a selection, in which case, it will be automatically derived by the configurator.

The preset modes for the SD650-N V3 are as follows:

- Maximum Performance Mode (feature BFYB): Achieves maximum performance but with higher power consumption and lower energy efficiency.

- Minimal Power Mode (feature BFYC): Minimize the absolute power consumption of the system.

- Efficiency Favoring Power Savings Mode (feature BFYD): Maximize the performance/watt efficiency with a bias towards power savings. This is the favored mode for SPECpower benchmark testing, for example.

- Efficiency Favoring Performance Mode (feature BFYE): Maximize the performance/watt efficiency with a bias towards performance. This is the favored mode for Energy Star certification, for example.
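The preset modes and their feature codes can be held in a simple lookup when scripting order checks. This is an illustrative sketch; `UEFI_PRESET_MODES` and `select_preset` are hypothetical names, and the ERP Lot9 handling simply encodes the guidance above that UK and EU customers should select feature BFYE.

```python
# Feature codes and mode names as listed above.
UEFI_PRESET_MODES = {
    "BFYB": "Maximum Performance Mode",
    "BFYC": "Minimal Power Mode",
    "BFYD": "Efficiency Favoring Power Savings Mode",
    "BFYE": "Efficiency Favoring Performance Mode",
}


def select_preset(feature_code: str, erp_lot9_region: bool = False) -> str:
    """Return the feature code to order for the factory preset mode."""
    if erp_lot9_region:
        # UK and EU orders: BFYE is the recommended selection for ERP Lot9.
        return "BFYE"
    if feature_code not in UEFI_PRESET_MODES:
        raise ValueError(f"unknown preset mode feature code: {feature_code}")
    return feature_code


code = select_preset("BFYC", erp_lot9_region=True)
print(code, "=", UEFI_PRESET_MODES[code])
```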

For details about these preset modes, and all other performance and power efficiency UEFI settings offered in the SD650-N V3, see the paper "Tuning UEFI Settings for Performance and Energy Efficiency on Intel Xeon Scalable Processor-Based ThinkSystem Servers", available from https://lenovopress.lenovo.com/lp1477.

Memory

The SD650-N V3 uses Lenovo TruDDR5 memory. When configured with 5th Gen Intel Xeon Scalable processors, the memory operates at up to 5600 MHz. When configured with 4th Gen processors, the memory operates at up to 4800 MHz. The server supports 16 DIMMs with 2 processors. The processors have 8 memory channels and support 1 DIMM per channel. The server supports up to 2TB of memory using 16x 128GB 3DS RDIMMs and two processors.

Lenovo TruDDR5 memory uses the highest quality components that are sourced from Tier 1 DRAM suppliers and only memory that meets the strict requirements of Lenovo is selected. It is compatibility tested and tuned to maximize performance and reliability. From a service and support standpoint, Lenovo TruDDR5 memory automatically assumes the system warranty, and Lenovo provides service and support worldwide.

The following table lists the 5600 MHz memory options that are currently supported by the SD650-N V3. These DIMMs are only supported with 5th Gen Intel Xeon processors.

The following table lists the 4800 MHz memory options that are currently supported by the SD650-N V3. These DIMMs are only supported with 4th Gen Intel Xeon processors. The 128GB 5600MHz RDIMM is also supported with 4th Gen processors.

9x4 RDIMMs (also known as EC4 RDIMMs) are a new lower-cost DDR5 memory option supported in ThinkSystem V3 servers. 9x4 DIMMs offer the same performance as standard RDIMMs (known as 10x4 or EC8 modules); however, they offer lower fault tolerance. Standard RDIMMs and 3DS RDIMMs support two 40-bit subchannels (that is, a total of 80 bits), whereas 9x4 RDIMMs support two 36-bit subchannels (a total of 72 bits). The extra bits in the subchannels allow standard RDIMMs and 3DS RDIMMs to support Single Device Data Correction (SDDC); 9x4 RDIMMs do not support SDDC. Note, however, that all DDR5 DIMMs, including 9x4 RDIMMs, support Bounded Fault correction, which enables the server to correct most common types of DRAM failures.
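The 10x4 and 9x4 names follow from the subchannel widths quoted above: each subchannel is built from x4 DRAM devices, and a DDR5 subchannel carries 32 data bits, so whatever width remains is available for ECC. The short sketch below works through that arithmetic; the function and constant names are illustrative only.

```python
DATA_BITS_PER_SUBCHANNEL = 32  # DDR5 carries 32 data bits per subchannel
SUBCHANNELS_PER_DIMM = 2
BITS_PER_X4_DEVICE = 4


def subchannel_layout(devices_per_subchannel: int) -> dict:
    """Derive subchannel width and ECC bits from the number of x4 devices."""
    width = devices_per_subchannel * BITS_PER_X4_DEVICE
    return {
        "subchannel_bits": width,
        "ecc_bits_per_subchannel": width - DATA_BITS_PER_SUBCHANNEL,
        "total_bits": width * SUBCHANNELS_PER_DIMM,
    }


print("10x4 (EC8):", subchannel_layout(10))
print("9x4 (EC4):", subchannel_layout(9))
```

The 10x4 layout yields 8 ECC bits per subchannel (hence EC8) and the 9x4 layout yields 4 (hence EC4), which matches the 80-bit and 72-bit totals given above.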

For more information on DDR5 memory, see the Lenovo Press paper, Introduction to DDR5 Memory, available from https://lenovopress.com/lp1618.

Tip: The SD650-N V3 server supports two Intel Xeon Max Series processors which each include 64GB of integrated High Bandwidth Memory (HBM2e) for a total of 128GB of memory. With Xeon Max Series processors, if your application has a small enough memory working set to fit entirely in less than 128GB, then it would be possible to not install any DDR5 memory DIMMs in the server.

The following rules apply when selecting the memory configuration:

- In DCSC, 4800 MHz memory can only be selected with 4th Gen Intel Xeon Scalable processors and Intel Max Series processors, and 5600 MHz memory can only be selected with 5th Gen Intel Xeon Scalable processors.

- An exception to the above rule is the following 5600 MHz DIMM which is supported with both 4th Gen and 5th Gen processors (operates at up to 4800 MHz with 4th Gen processors):

- ThinkSystem 128GB TruDDR5 5600MHz (2Rx4) RDIMM, 4X77A93887

- With Intel Xeon Scalable processors, the SD650-N V3 only supports quantities of 16 DIMMs with two processors installed; other quantities are not supported

- With Intel Max Series processors, the SD650-N V3 only supports quantities of 0, 8, or 16 DIMMs with two processors installed; other quantities are not supported

- The server supports three types of DIMMs: 9x4 RDIMMs, RDIMMs, and 3DS RDIMMs; UDIMMs and LRDIMMs are not supported

- All memory DIMMs must be identical part numbers

- Memory mirroring is not supported with 9x4 DIMMs

- The memory channels will operate at either the speed of the memory DIMMs installed, or the speed of the processor's memory bus, whichever is lower

- All supported processors support DIMMs with 16Gb DRAM technology - see the DRAM technology column in the above tables.

- All supported processors support DIMMs with 24Gb DRAM technology, except the following:

- The following 4th Gen processors: 6426Y, 6434, 6442Y, 6444Y, 6448Y, 6458Q

- All Max Series processors
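Several of the rules above are simple enough to express as a pre-check before using the configurator. The following is a rough sketch that covers only the DIMM quantity rule, the identical-part-number rule, and the speed rule; the function names and the `"xeon_scalable"` / `"xeon_max"` family labels are assumptions made for illustration. It does not replace DCSC validation.

```python
# Supported DIMM quantities with two processors installed, per the rules above.
ALLOWED_DIMM_COUNTS = {
    "xeon_scalable": {16},
    "xeon_max": {0, 8, 16},
}


def check_dimm_population(family: str, dimm_part_numbers: list) -> list:
    """Return rule violations for a proposed list of DIMM part numbers."""
    problems = []
    count = len(dimm_part_numbers)
    allowed = ALLOWED_DIMM_COUNTS[family]
    if count not in allowed:
        problems.append(f"{count} DIMMs is not supported; allowed: {sorted(allowed)}")
    if len(set(dimm_part_numbers)) > 1:
        problems.append("all DIMMs must be identical part numbers")
    return problems


def effective_memory_speed(dimm_speed_mhz: int, cpu_bus_speed_mhz: int) -> int:
    """Channels run at the DIMM speed or the CPU memory bus speed, whichever is lower."""
    return min(dimm_speed_mhz, cpu_bus_speed_mhz)


# 16x of the 128GB 5600MHz RDIMM on 4th Gen processors (4800 MHz memory bus).
print(check_dimm_population("xeon_scalable", ["4X77A93887"] * 16))
print(effective_memory_speed(5600, 4800))
```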

For best performance, consider the following:

- Ensure the memory installed is at least the same speed as the memory bus of the selected processor.

- Populate all 8 memory channels.

The following memory protection technologies are supported:

- ECC detection/correction

- Bounded Fault detection/correction

- SDDC (for 10x4-based memory DIMMs; look for "x4" in the DIMM description)

- ADDDC (for 10x4-based memory DIMMs, not supported with 9x4 DIMMs)

- Memory mirroring

See the Lenovo Press article, RAS Features of the Lenovo ThinkSystem Intel Servers for more information about memory RAS features.

If memory channel mirroring is used, then DIMMs must be installed in pairs (minimum of one pair per processor), and both DIMMs in the pair must be identical in type and size. 50% of the installed capacity is available to the operating system.
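As a quick illustration of the 50% figure, the sketch below computes the capacity visible to the operating system with and without mirroring. The function name is hypothetical, and the 64GB DIMM size in the example is just a sample value.

```python
def usable_capacity_gb(dimm_count: int, dimm_size_gb: int, mirroring: bool = False) -> int:
    """Capacity available to the OS; mirroring halves the installed capacity."""
    installed = dimm_count * dimm_size_gb
    if not mirroring:
        return installed
    if dimm_count % 2:
        # Mirroring requires DIMMs to be installed in pairs.
        raise ValueError("memory mirroring requires an even number of DIMMs")
    return installed // 2


print(usable_capacity_gb(16, 64))                  # installed capacity in GB
print(usable_capacity_gb(16, 64, mirroring=True))  # half of the installed capacity
```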

Memory rank sparing is implemented using ADDDC/ADC-SR/ADDDC-MR to provide DRAM-level sparing feature support.

GPU accelerators

A key feature of the SD650-N V3 is the integration of a 4x SXM5 GPU complex on the left half of the server as shown in the Components and connectors section. The server supports four NVIDIA HGX H200 or H100 GPU modules that are connected together using high-speed fourth-generation NVLink interconnects.

The GPUs supported are listed in the following table.

The NVIDIA H200 and H100 Tensor Core GPUs deliver unprecedented performance, scalability and security to every data center and include the NVIDIA AI Enterprise software suite for streamlined AI development and deployment.

The NVIDIA H200 and H100 support granular power management by using the Total Graphics Power (TGP) setting. This setting determines the maximum power each GPU can use, which in turn dictates how many nodes can be installed in the enclosure and how warm the inlet water can be while still properly cooling all nodes.

Lenovo supports preset TGP values of 500W, 600W and 700W. With a full 350W processor configuration and the GPUs set to 700W, a system inlet water temperature of up to 40°C is supported, based on a flow rate of 4 liters per minute (lpm) per tray. With the GPUs set to 600W, 45°C inlet water is supported. The supported trays per chassis are shown in the Power supplies section.
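The relationship between TGP and inlet water temperature can be captured in a small lookup for planning purposes. This sketch encodes only the two data points stated above (350W processors, 4 lpm per tray); no limit is stated for the 500W preset, so it is deliberately left out rather than guessed. The names are illustrative.

```python
SUPPORTED_TGP_W = (500, 600, 700)

# Maximum inlet water temperature (°C) stated above for each TGP setting.
# No value is given for 500W, so it is omitted here.
STATED_MAX_INLET_C = {700: 40, 600: 45}


def highest_tgp_for_inlet(inlet_c: float):
    """Return the highest TGP (W) whose stated inlet limit covers inlet_c, or None."""
    candidates = [tgp for tgp, limit in STATED_MAX_INLET_C.items() if inlet_c <= limit]
    return max(candidates) if candidates else None


for inlet in (38, 42, 46):
    print(inlet, "°C ->", highest_tgp_for_inlet(inlet))
```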

The desired TGP setting is applied in the factory by specifying the matching feature code in the configurator. The following table lists the feature codes that can be selected.

Tip: Total Graphics Power (TGP) is also called Continuous Electrical Design Point (EDPc). The peak EDP (EDPp) of the GPU can be as much as 80% higher than the EDPc. When the EDPc is adjusted, the related EDPp is adjusted in the same ratio. In addition to changing the EDPc, the GPUs support a programmable EDP setting that can limit the peak EDP to as low as 44% above the set EDPc.
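The percentages in the tip translate into peak power figures as follows. This is a simple worked calculation using the 80% and 44% figures quoted above; the function name and dictionary keys are made up for illustration.

```python
def edp_limits_w(edpc_w: int) -> dict:
    """Peak EDP bounds implied by a given EDPc (TGP) setting, in watts."""
    return {
        "edpc_w": edpc_w,
        # EDPp can be as much as 80% higher than EDPc.
        "edpp_max_w": edpc_w * (100 + 80) / 100,
        # The programmable EDP can cap the peak no lower than 44% above EDPc.
        "edpp_min_programmable_w": edpc_w * (100 + 44) / 100,
    }


for tgp in (500, 600, 700):
    print(edp_limits_w(tgp))
```

For a 700W setting this gives a peak of up to 1260W per GPU, or 1008W with the programmable limit at its lowest value.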

Internal storage

The SD650-N V3 node supports one or two SSDs internally in the node. These are internal drives that are not front accessible and are not hot-swap. See the Components and connectors section for the location of the drives.

The SD650-N V3 supports either:

- 2x E3.S 1T drives

- 2x 2.5-inch 7mm drives

- 1x 2.5-inch 15mm drive

Configuration notes:

- The node only supports NVMe drives; SATA and SAS drives are not supported

- The drives are connected to onboard controllers; RAID functionality is provided by the operating system (VROC). Details are in the Intel VROC onboard RAID section.

- NVMe drives are connected to CPU 1 in all configurations

- When 2x 7mm or 2x E3.S drives are installed in a node, they are numbered drive 2 (bottom) and 3 (top). When 1x 15mm drive is installed, it is numbered 2.

In addition, the SD650-N V3 node supports a single high-performance M.2 NVMe drive, installed in an adapter mounted on top of the front processor. For details, see the M.2 drive section.

The feature codes to select the appropriate storage cage are listed in the following table:

The necessary storage cables are auto-derived by the configurator.

To upgrade systems installed in the field with storage options, there are separate kits available that contain both the cage and the necessary cables. The option part numbers of the upgrade kits are listed in the following table.

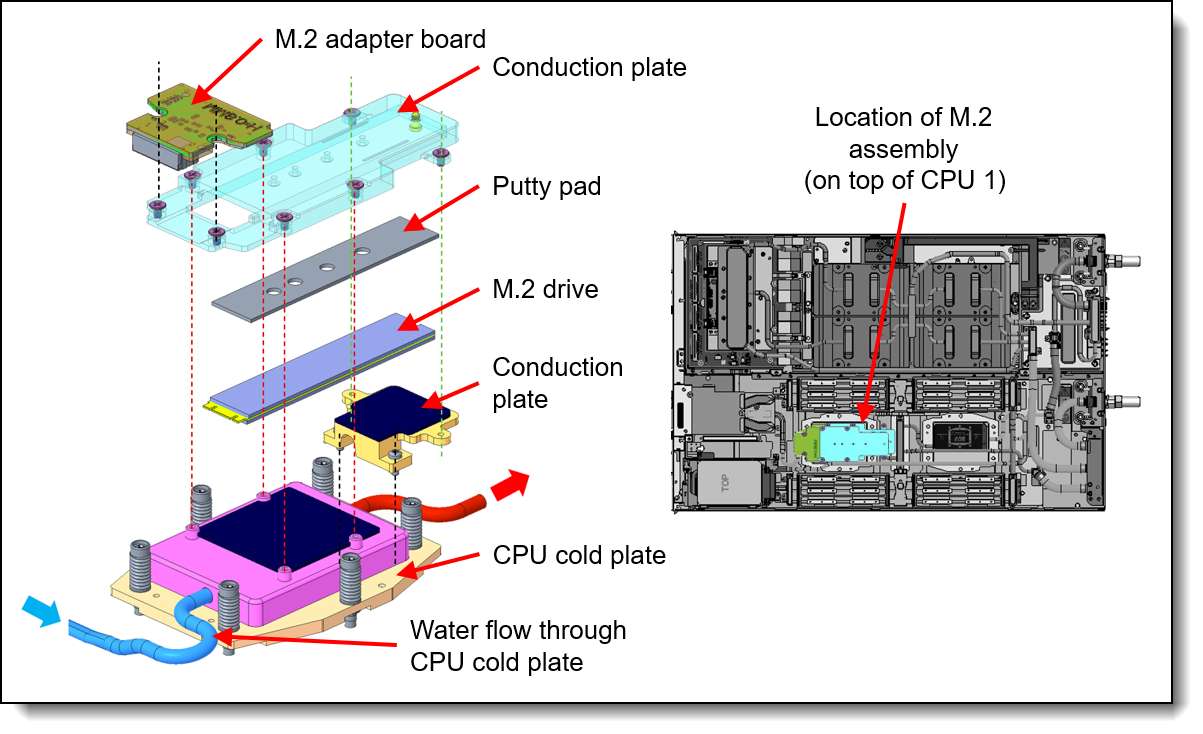

M.2 drive

The SD650-N V3 supports one M.2 form-factor NVMe drive for use as an operating system boot solution. The M.2 drive installs into an M.2 adapter which is mounted on top of the front processor in the node. See the internal view of the node in the Components and connectors section for the location of the M.2 drive.

PCIe x4 interface: In the SD650-N V3, the M.2 drive is connected to the processor using a PCIe x4 connection, which enables the M.2 drive to operate at the highest performance.

Figure 10. Components and location of the M.2 enablement kit

The ordering information of the M.2 adapter is listed in the following table. Supported drives are listed in the Internal drive options section.

Note: In the SD650-N V3, the M.2 adapter only supports NVMe drives. SATA M.2 drives are not supported.

The M.2 enablement kit has the following features:

- Supports one NVMe M.2 drive

- Supports 80mm and 110mm drive form factors (2280 and 22110)

- PCIe 4.0 x4 NVMe interface to the drive

- Connects to CPU 1 via onboard NVMe connector

- Supports monitoring and reporting of events and temperature through I2C

- Firmware update via Lenovo firmware update tools

- Water-cooled via the attached cold plate

Intel VROC onboard RAID

Intel VROC (Virtual RAID on CPU) is a feature of the Intel processor that enables Integrated RAID support.

On the SD650-N V3, Intel VROC provides RAID functions for the onboard NVMe controller (Intel VROC NVMe RAID).

VROC NVMe RAID offers RAID support for any NVMe drives directly connected to the ports on the server's system board. On the SD650-N V3, RAID 0 and 1 are implemented.
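For sizing purposes, the usable capacity of the two RAID levels implemented on this server can be estimated as shown below. This is a generic RAID 0 / RAID 1 calculation for a two-drive array, not a description of VROC internals; the function name is hypothetical and metadata overhead is ignored.

```python
def vroc_usable_gb(raid_level: int, drive_sizes_gb: list) -> int:
    """Approximate usable capacity of a two-drive RAID 0 or RAID 1 array."""
    if len(drive_sizes_gb) != 2:
        raise ValueError("this sketch models a two-drive array only")
    smallest = min(drive_sizes_gb)
    if raid_level == 0:
        return 2 * smallest  # striping: both drives contribute capacity
    if raid_level == 1:
        return smallest      # mirroring: one drive's worth of capacity
    raise ValueError("only RAID 0 and RAID 1 are implemented on the SD650-N V3")


print(vroc_usable_gb(0, [1920, 1920]))
print(vroc_usable_gb(1, [1920, 1920]))
```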

The SD650-N V3 supports the VROC NVMe RAID offerings listed in the following table.

Configuration notes:

- If a feature code is ordered in a CTO build, the VROC functionality is enabled in the factory. For field upgrades, order a part number and it will be fulfilled as a Feature on Demand (FoD) license which can then be activated via the XCC management processor user interface.

- Intel VROC NVMe is supported on all Intel Xeon Scalable processors

Virtualization support: Virtualization support for Intel VROC is as follows:

- VROC (VMD) NVMe RAID: Supported by ESXi, KVM, Xen, and Hyper-V. ESXi support is limited to RAID 1 only; other RAID levels are not supported. On ESXi, VROC is supported with both boot and data drives. Windows and Linux operating systems support VROC NVMe RAID for both host boot functions and guest OS functions, with RAID 0, 1, 5, and 10 supported.

Controllers for internal storage

The drives of the SD650-N V3 are connected to an integrated NVMe storage controller:

- Onboard PCIe x4 NVMe ports

RAID functionality is provided by Intel VROC.

Internal drive options

The following tables list the drive options for internal storage of the server.

Trayless drives:

M.2 drives:

M.2 drive support: The use of M.2 drives requires an additional adapter as described in the M.2 drive section.

SED support: The tables include a column to indicate which drives support SED encryption. The encryption functionality can be disabled if needed. Note: Not all SED-enabled drives have "SED" in the description.

Optical drives

The server supports the external USB optical drive listed in the following table.

| Part number | Feature code | Description |
|---|---|---|
| 7XA7A05926 | AVV8 | ThinkSystem External USB DVD RW Optical Disk Drive |

The drive is based on the Lenovo Slim DVD Burner DB65 drive and supports the following formats: DVD-RAM, DVD-RW, DVD+RW, DVD+R, DVD-R, DVD-ROM, DVD-R DL, CD-RW, CD-R, CD-ROM.

I/O expansion options

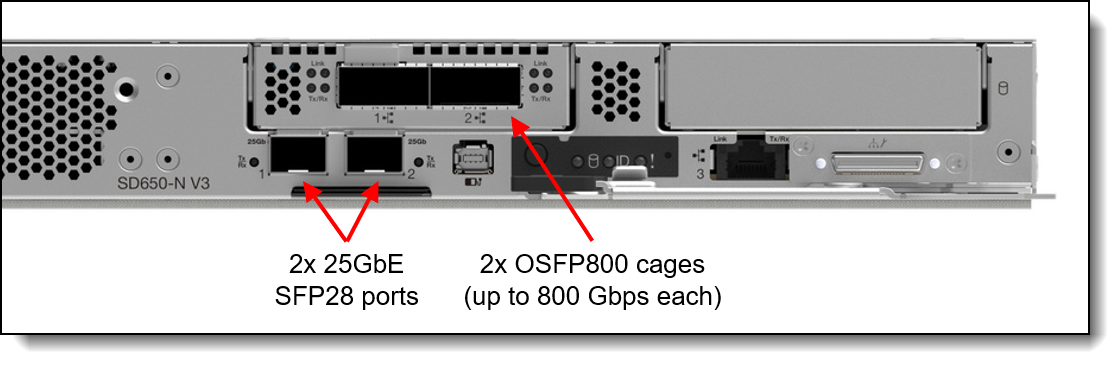

The SD650-N V3 offers I/O connectivity in the form of high-speed GPU Direct connections to the four NVIDIA GPUs in the system. These InfiniBand NDR connections with OSFP cages are in addition to two onboard 25 GbE ports with SFP28 cages.

The location of these ports is shown in the following figure.

Figure 11. SD650-N V3 networking

The server also supports an optional PCIe 5.0 x16 slot for additional networking capability. The slot is implemented using a 1-slot riser. Ordering information is in the following table.

| Part number | Feature code | Description |
|---|---|---|
| 4TA7A86675 | BKTE | ThinkSystem SD650, SD650-I V3 1U PCIe Riser |

The slot can alternatively be configured as internal drive bays, as described in the Internal storage section.

Network adapters

The SD650-N V3 has five network ports: one 1Gb port, two 25Gb ports, and two 800Gb ports. The server optionally supports a network adapter in a PCIe slot.


Onboard 25Gb and 1Gb ports

The SD650-N V3 has three onboard network ports:

- 2x 25GbE ports, connected to an onboard Mellanox ConnectX-4 Lx controller, implemented with SFP28 cages for optical or copper connections. Supports 1Gb, 10Gb and 25Gb connections.

- 1x 1GbE port, connected to an onboard Intel I210 controller, implemented with an RJ45 port for copper cabling

The locations of these ports are shown in the Components and connectors section. The 1GbE port and 25GbE Port 1 both support NC-SI for remote management. For factory orders, to specify which ports should have NC-SI enabled, use the feature codes listed in the Remote Management section. If neither is chosen, both ports will have NC-SI disabled by default.

For the specifications of the 25GbE ports including the supported transceivers and cables, see the Mellanox ConnectX-4 product guide:

https://lenovopress.lenovo.com/lp0098-mellanox-connectx-4

OSFP800 ports

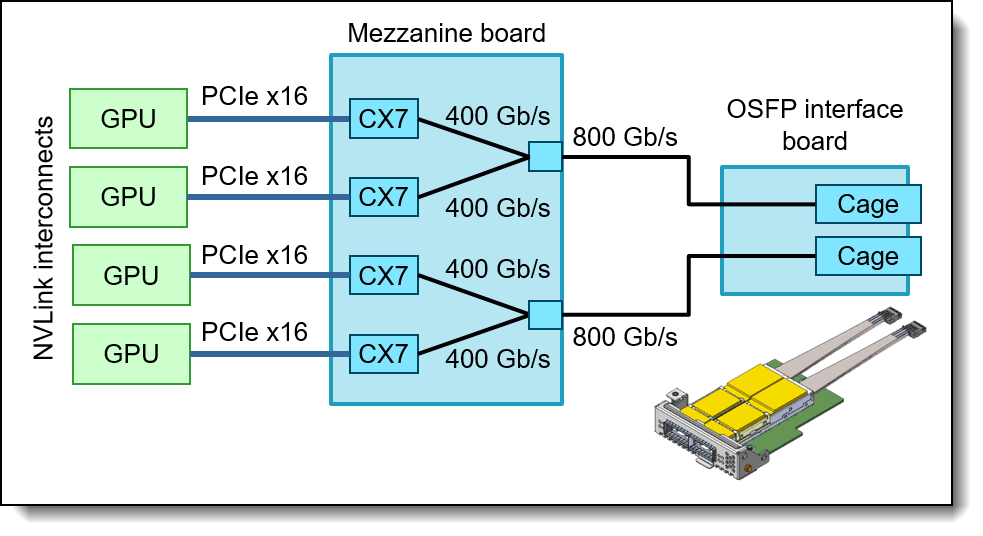

The SD650-N V3 includes an I/O mezzanine board containing four NVIDIA ConnectX-7 VPI network controllers. The board is automatically included in the order.

| Part number | Feature code | Description |
|---|---|---|
| CTO only | BQQU | ThinkSystem NVIDIA ConnectX-7 4-chip VPI PCIe Gen5 Mezz Controller |

The mezzanine board has two connectors where an OSFP board is attached via cables as shown in the following figure. The server makes use of OSFP-DD (double-density) connections to double the bandwidth from 400 Gb/s to 800 Gb/s per physical port.

Figure 12. GPU Direct connectivity in the SD650-N V3

The SD650-N V3 supports OSFP boards with either two double-400 Gb/s interfaces or two 400 Gb/s interfaces, resulting in full NDR InfiniBand or NDR200 InfiniBand bandwidth per GPU. The choices are listed in the following table.
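The per-GPU figures follow from dividing the aggregate OSFP bandwidth across the four GPUs. The sketch below shows the arithmetic, assuming the bandwidth is shared evenly; the names are illustrative.

```python
GPUS_PER_NODE = 4
OSFP_PORTS_PER_NODE = 2


def per_gpu_bandwidth_gbps(port_speed_gbps: int) -> int:
    """InfiniBand bandwidth per GPU for a given per-port speed (even split assumed)."""
    return OSFP_PORTS_PER_NODE * port_speed_gbps // GPUS_PER_NODE


print(per_gpu_bandwidth_gbps(800))  # two double-400 Gb/s interfaces
print(per_gpu_bandwidth_gbps(400))  # two 400 Gb/s interfaces
```

With 800 Gb/s ports each GPU gets 400 Gb/s (NDR); with 400 Gb/s ports each GPU gets 200 Gb/s (NDR200).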

The following table lists the transceiver supported by ThinkSystem SD665-N, SD650-N V3 4x NDR Infiniband Interface (BRK8).

| Part number | Feature code | Description | Max Qty |
|---|---|---|---|
| 4TC7A83365 | BQMJ | ThinkSystem NDRx2 OSFP800 IB Multi Mode Twin-Transceiver Flat Top | 2 |

For the specifications of the OSFP ports including the supported transceivers and cables, see the NVIDIA ConnectX-7 product guide:

https://lenovopress.lenovo.com/lp1692-thinksystem-nvidia-connectx-7-ndr-infiniband-osfp400-adapters

The following table lists the supported cables for ThinkSystem SD665-N, SD650-N V3 4x NDR Infiniband Interface, BRK8.

The following table lists the supported cables for ThinkSystem SD665-N, SD650-N V3 4x NDR200 Infiniband Interface, BRK9.

Cooling

One of the most notable features of the ThinkSystem SD650-N V3 offering is direct water cooling. Direct water cooling (DWC) is achieved by circulating the cooling water directly through cold plates that contact the CPU thermal case, DIMMs, and other high-heat-producing components in the node.

One of the main advantages of direct water cooling is that the water can be relatively warm and still be effective because water conducts heat much more effectively than air. Depending on the server and power supply configuration as well as environmental conditions such as water and air temperature, effectively 100% of the heat can be removed by water cooling; in configurations that stay slightly below that, the rest can be easily managed by a standard computer room air conditioner. Measured data at a customer data center shows 98% heat capture at 45°C water inlet temperature and 99% heat capture at 40°C water inlet temperature and 26.6°C ambient temperature with insulated racks using the SD650-N V2.

Allowable inlet temperatures for the water can be as high as 45°C (113°F) with the SD650-N V3. In most climates, water-side economizers can supply water at temperatures below 45°C for most of the year. This ability allows the data center chilled water system to be bypassed thus saving energy because the chiller is the most significant energy consumer in the data center. Typical economizer systems, such as dry-coolers, use only a fraction of the energy that is required by chillers, which produce 6-10 °C (43-50 °F) water. The facility energy savings are the largest component of the total energy savings that are realized when the SD650-N V3 is deployed.

The advantages of the use of water cooling over air cooling result from water’s higher specific heat capacity, density, and thermal conductivity. These features allow water to transmit heat over greater distances with much less volumetric flow and reduced temperature difference as compared to air.

For cooling IT equipment, this heat transfer capability is its primary advantage. Water has a tremendously increased ability to transport heat away from its source to a secondary cooling surface, which allows for large, more optimally designed radiators or heat exchangers rather than small, inefficient fins that are mounted on or near a heat source, such as a CPU.
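To put numbers on this, the temperature rise of the coolant can be estimated from the heat balance P = ṁ · c_p · ΔT. The sketch below is a rough, idealized estimate only: it assumes all heat goes into the water, uses approximate properties for water near 40°C, and takes as its example load only the processors and GPUs (2x 350W plus 4x 700W) at the 4 lpm per tray flow rate mentioned in the GPU accelerators section. All names are illustrative.

```python
WATER_DENSITY_KG_PER_L = 0.992  # approximate value for water near 40°C
WATER_CP_J_PER_KG_K = 4180.0    # approximate specific heat capacity of water


def coolant_temperature_rise_k(heat_load_w: float, flow_lpm: float) -> float:
    """Estimate the water temperature rise (K) across a tray: dT = P / (m_dot * c_p)."""
    mass_flow_kg_per_s = flow_lpm / 60.0 * WATER_DENSITY_KG_PER_L
    return heat_load_w / (mass_flow_kg_per_s * WATER_CP_J_PER_KG_K)


# Example load: two 350W processors and four 700W GPUs (other components ignored).
example_load_w = 2 * 350 + 4 * 700
print(round(coolant_temperature_rise_k(example_load_w, flow_lpm=4.0), 1), "K")
```

Under these assumptions, 3500W at 4 lpm warms the water by about 12.7 K between inlet and outlet. Actual values depend on the full tray power and the coolant used.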

The ThinkSystem SD650-N V3 offering uses the benefits of water by distributing it directly to the highest heat generating node subsystem components. By doing so, the offering realizes 7% - 10% direct energy savings when compared to an air-cooled equivalent. Those energy savings result from the removal of the system fans and the lower operating temperature of the direct water-cooled system components.

The direct energy savings at the enclosure level, combined with the potential for significant facility energy savings, makes the SD650-N V3 an excellent choice for customers that are burdened by high energy costs or with a sustainability mandate.

Water is delivered to each of the nodes from a coolant distribution unit (CDU) via the water manifold. As shown in the following figure, each manifold section attaches to an enclosure and connects directly to the water inlet and outlet connectors for each compute node to deliver water safely and reliably to and from each server tray.

The DWC Manifold is modular and is available in multiple configurations that are based on the number of enclosure drops that are required in a rack. The Manifold scales to support up to six Enclosures in a single rack, as shown in the following figure. Ordering information for the water manifold is in the Manifold assembly section.

Figure 13. DW612S enclosure and manifold assembly

The water flows through the SD650-N V3 tray to cool all major heat-producing components. The inlet water is split into two parallel paths, one for each node in the tray. Each path is then split further to cool the processors, memory, drives (including the M.2 drive) and adapters.

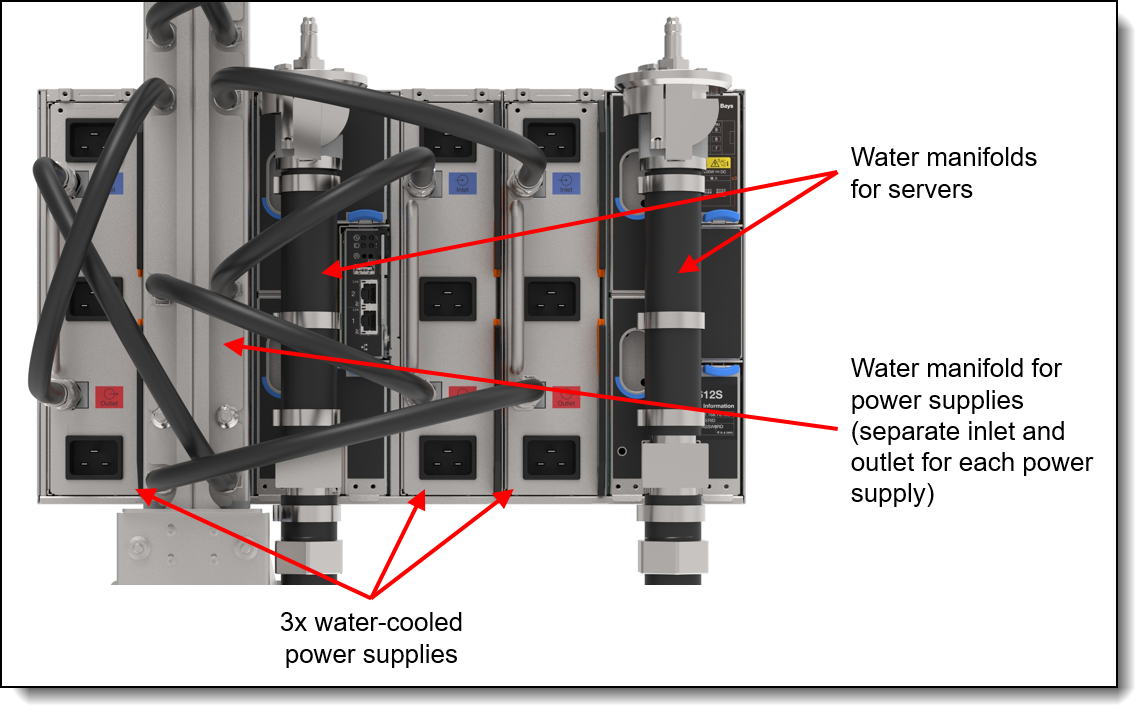

When the DW612S is configured with water-cooled power supplies, an additional water manifold is used to supply water to each of the three power supplies, as shown in the following figure. Ordering information for the manifold is in the Manifold assembly section.

Figure 14. DW612S enclosure with water-cooled power supplies and manifold

During the manufacturing and test cycle, Lenovo’s water-cooled nodes are pressure tested with helium according to ASTM E499 / E499M – 11 (Standard Practice for Leaks Using the Mass Spectrometer Leak Detector in the Detector Probe Mode) and later again with nitrogen. Because helium and nitrogen have smaller molecule sizes, these tests detect micro-leaks that may be undetectable by pressure testing with water or a water/glycol mixture.

This approach also allows Lenovo to ship the systems pressurized without needing to send hazardous antifreeze components to our customers.

Onsite, the materials used within the water loop from the CDU to the nodes should be limited to copper alloys with brazed joints, stainless steels with TIG and MIG welded joints, and EPDM rubber. In some instances, PVC might be an acceptable choice within the facility.

The water that the system is filled with must be reasonably clean, bacteria-free water (< 100 CFU/ml) such as de-mineralized water, reverse osmosis water, de-ionized water, or distilled water. It must be filtered with an in-line 50-micron filter. Biocide and corrosion inhibitors ensure clean operation without microbiological growth or corrosion.

Lenovo Data Center Power and Cooling Services can support you in the design, implementation and maintenance of the facility water-cooling infrastructure.

Power supplies

The DW612S enclosure supports air-cooled or water-cooled power supplies. The use of water-cooled power supplies enables an even greater amount of heat to be removed from the data center using water instead of air-conditioning.

The DW612S with SD650-N V3 servers installed support the following power supply quantities:

- 9x air-cooled power supplies, each with one power connector

- 3x water-cooled power supplies, each with three power connectors

Tip: Use Lenovo Capacity Planner to determine the power needs for your rack installation. See the Lenovo Capacity Planner section for details.

The power supplies provide N+1 redundancy (water-cooled power supplies each count as 3), depending on population and configuration of the node trays. Power policies with no redundancy are also supported. Water-cooled power supply units contain 3 discrete power supplies, which means that with 3 water-cooled power supply units, 8+1 redundancy is supported.

Topics in this section:

- Power supply layout

- Power supply ordering information

- Power output

- Limitations based on GPU power requirements

- Power cables

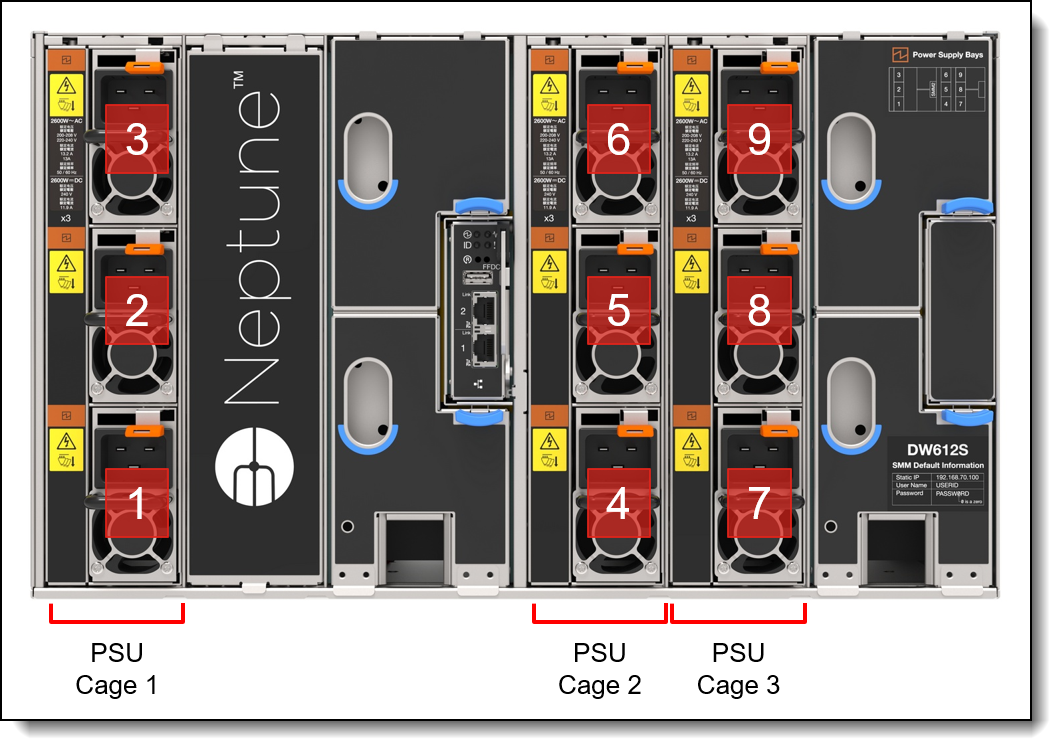

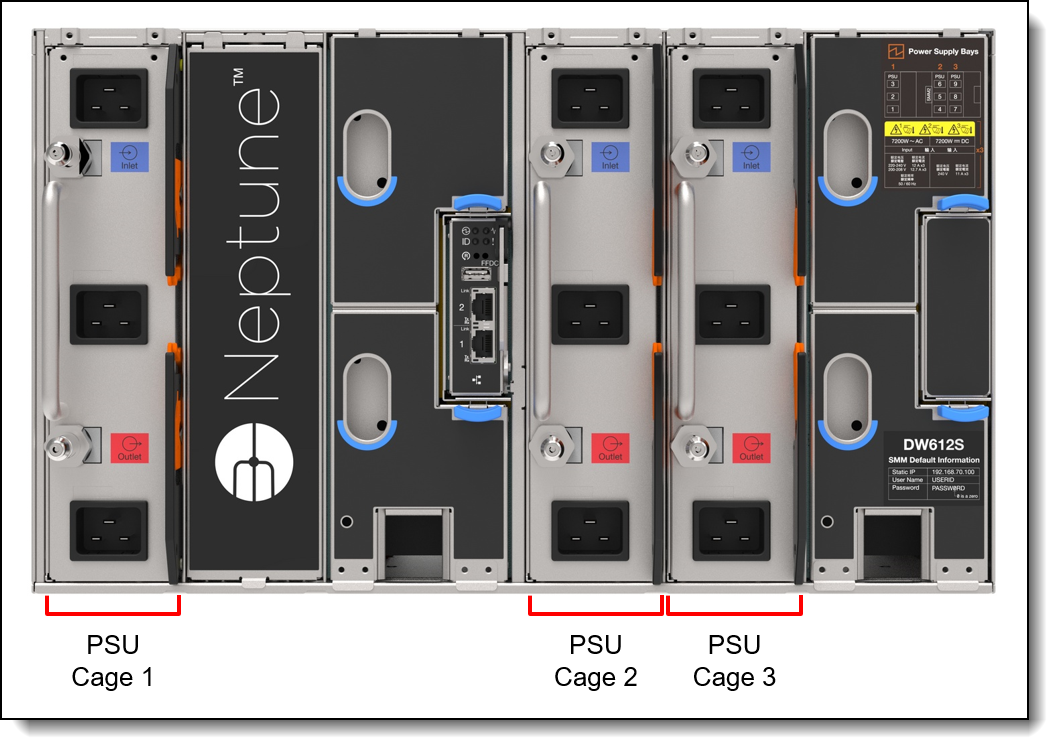

Power supply layout

Power supplies are implemented in the DW612S enclosure in vertical cages, with three air-cooled power supplies or one water-cooled power supply in each cage. The following figure shows nine air-cooled power supplies installed in three cages.

Figure 15. Power supplies and cages in the DW612S enclosure (shown with 9 air-cooled power supplies)

The following figure shows the DW612S with three water-cooled power supplies installed.

Figure 16. Power supplies and cages in the DW612S enclosure (shown with 3 water-cooled power supplies)

Power supply ordering information

The following table lists the supported power supplies for use in the DW612S enclosure with SD650-N V3 nodes installed. Mixing of power supply capacities (different part number) is not supported.

* Only offered in USA; not available in other markets

The power supply units have the following features:

- 80 PLUS Platinum or Titanium certified as listed in the table above

- Supports N+1 power redundancy or non-redundant power configurations:

  - For air-cooled power supplies: 8+1

  - For water-cooled power supplies: 8+1

- Power management configured through the SMM

- Integrated 25K RPM fan

- Built-in overload and surge protection

- 230V AC power supplies support high-range voltage only; 110V AC not supported

Power output

The power rating of each power supply (2600W) depends on the voltage of the input supply. For example, a 208V supply generates less power than a 240V supply. Take this into consideration when determining your power needs. The following table provides the details for each supported power supply unit. A yellow cell indicates lower power availability than the rated power.

Limitations based on GPU power requirements

The following table shows the power limits based on the configured Peak EDP (EDPp) setting for a high-end dual-socket configuration (2x Intel Xeon Platinum 8480+ processors, NVIDIA H100 SXM5 700W 94G HBM2e GPU Board, 16x 32GB memory).

Power cables

The power supplies in the DW612S enclosure have C19 connectors and support the following rack power cables.

For the HVAC power supply, the rack power cable listed in the following table is supported.

System Management

The SD650-N V3 contains an integrated service processor, XClarity Controller 2 (XCC2), which provides advanced control, monitoring, and alerting functions. The XCC2 is based on the AST2600 baseboard management controller (BMC) using a dual-core ARM Cortex A7 32-bit RISC service processor running at 1.2 GHz.

Topics in this section:

- Local console

- External Diagnostics Handset

- System status with XClarity Mobile

- Remote management

- XCC2 Platinum

- Remote management using the SMM

- Lenovo HPC & AI Software Stack

- Lenovo XClarity Provisioning Manager

- Lenovo XClarity Essentials

- Lenovo XClarity Administrator

- Lenovo XClarity Integrators

- Lenovo XClarity Energy Manager

- Lenovo Capacity Planner

Local console

The SD650-N V3 node supports a local console with the use of a console breakout cable. The cable connects to the port on the front of the node as shown in the following figure.

Figure 17. Console breakout cable

The cable has the following connectors:

- VGA port

- Serial port

- USB 3.1 Gen 1 (5 Gb/s) port

Tip: USB 3.0 was renamed to USB 3.1 Gen 1 by the USB Implementers Forum. The terms "USB 3.0" and "USB 3.1 Gen 1" are used interchangeably - both offer a 5 Gb/s USB connection.

As well as local console functions, the USB port on the breakout cable also supports the use of the XClarity Mobile app as described in the next section.

Ordering information for the cable is listed in the following table.

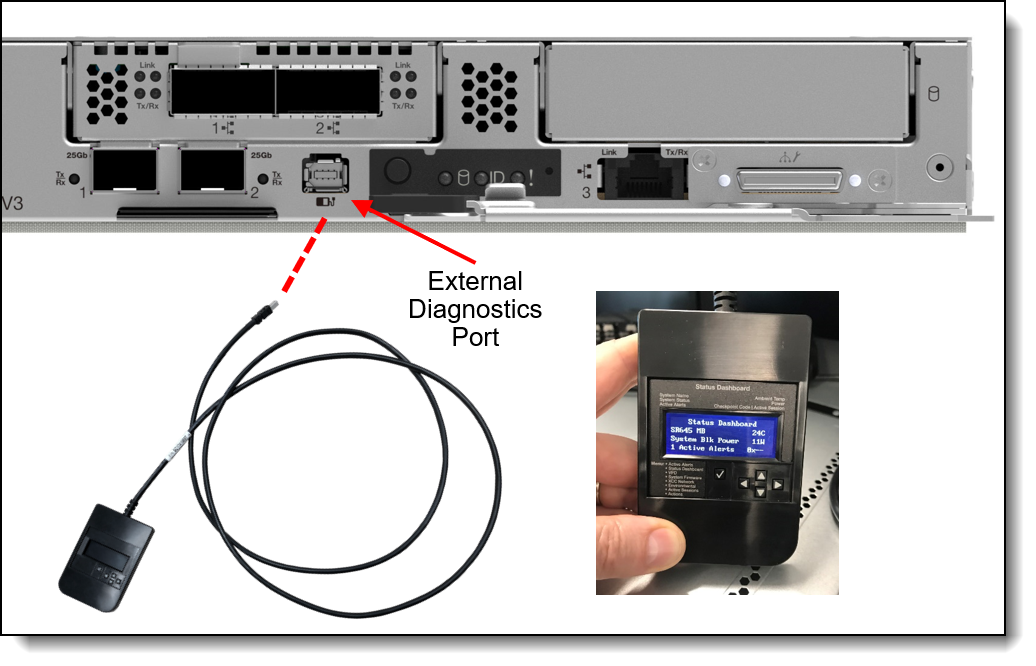

External Diagnostics Handset

The SD650-N V3 has a port to connect an External Diagnostics Handset as shown in the following figure.

The External Diagnostics Handset allows quick access to system status, firmware, network, and health information. The LCD display on the panel and the function buttons give you access to the following information:

- Active alerts

- Status Dashboard

- System VPD: machine type & model, serial number, UUID string

- System firmware levels: UEFI and XCC firmware

- XCC network information: hostname, MAC address, IP address, DNS addresses

- Environmental data: Ambient temperature, CPU temperature, AC input voltage, estimated power consumption

- Active XCC sessions

- System reset action

The handset has a magnet on the back of it to allow you to easily mount it on a convenient place on any rack cabinet.

Figure 18. SD650-N V3 External Diagnostics Handset

Ordering information for the External Diagnostics Handset is listed in the following table.

System status with XClarity Mobile

The XClarity Mobile app includes a tethering function where you can connect your Android or iOS device to the server via USB to see the status of the server.

The steps to connect the mobile device are as follows:

- Enable USB Management on the server, by holding down the ID button for 3 seconds (or pressing the dedicated USB management button if one is present)

- Connect the mobile device via a USB cable to the server's USB port with the management symbol

- In iOS or Android settings, enable Personal Hotspot or USB Tethering

- Launch the Lenovo XClarity Mobile app

Once connected you can see the following information:

- Server status including error logs (read only, no login required)

- Server management functions (XClarity login credentials required)

Remote management

The 1Gb onboard port and one of the 25Gb onboard ports (port 1) on the front of the SD650-N V3 offer a connection to the XCC for remote management. This shared-NIC functionality allows the ports to be used both for operating system networking and for remote management.

Remote server management is provided through industry-standard interfaces:

- Intelligent Platform Management Interface (IPMI) Version 2.0

- Simple Network Management Protocol (SNMP) Version 3 (no SET commands; no SNMP v1)

- Common Information Model (CIM-XML)

- Representational State Transfer (REST) support

- Redfish support (DMTF compliant)

- Web browser - HTML 5-based browser interface (Java and ActiveX not required) using a responsive design (content optimized for device being used - laptop, tablet, phone) with NLS support
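As a rough illustration of the Redfish interface listed above, the following sketch reads basic status data from a service processor. It assumes the standard DMTF service root `/redfish/v1` and the standard `ComputerSystem` properties `PowerState` and `Status.Health`. The host name, credentials, and helper names are placeholders, and the exact resources exposed by the XCC2 should be confirmed in the XCC2 documentation.

```python
import base64
import json
import urllib.request

REDFISH_ROOT = "/redfish/v1"  # standard DMTF Redfish service root


def systems_url(host):
    """Build the URL of the standard Redfish Systems collection."""
    return f"https://{host}{REDFISH_ROOT}/Systems"


def summarize_system(resource):
    """Extract a few standard ComputerSystem properties from a Redfish payload."""
    status = resource.get("Status", {})
    return {
        "id": resource.get("Id"),
        "power_state": resource.get("PowerState"),
        "health": status.get("Health"),
    }


def fetch_json(url, user, password, context=None):
    """GET a Redfish resource using HTTP Basic authentication.

    Pass an ssl.SSLContext as `context` if the controller's certificate
    needs custom trust settings.
    """
    token = base64.b64encode(f"{user}:{password}".encode()).decode()
    request = urllib.request.Request(
        url, headers={"Authorization": f"Basic {token}"}
    )
    with urllib.request.urlopen(request, context=context, timeout=10) as response:
        return json.load(response)


if __name__ == "__main__":
    # Hypothetical payload shaped like a ComputerSystem resource
    sample = {"Id": "1", "PowerState": "On",
              "Status": {"State": "Enabled", "Health": "OK"}}
    print(systems_url("xcc.example.com"))
    print(summarize_system(sample))
```

The parsing step is kept separate from the network call so that it can be exercised against a saved payload without access to a live controller.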

The 1Gb port and 25Gb Port 1 support NC-SI. You can enable NC-SI in the factory using the feature codes listed in the following table. If neither feature code is selected, both ports will have NC-SI disabled.

IPMI via the Ethernet port (IPMI over LAN) is supported; however, it is disabled by default. For CTO orders, you can specify whether you want the feature enabled or disabled in the factory, using the feature codes listed in the following table.

XCC2 Platinum

The XCC2 service processor in the SD650-N V3 supports an upgrade to the Platinum level of features. Compared to the XCC functions of ThinkSystem V2 and earlier systems, Platinum adds the same features as Enterprise and Advanced levels in ThinkSystem V2, plus additional features.

XCC2 Platinum adds the following Enterprise and Advanced functions:

- Remotely viewing video with graphics resolutions up to 1600x1200 at 75 Hz with up to 23 bits per pixel, regardless of the system state

- Remotely accessing the server using the keyboard and mouse from a remote client

- International keyboard mapping support

- Syslog alerting

- Redirecting serial console via SSH

- Component replacement log (Maintenance History log)

- Access restriction (IP address blocking)

- Lenovo SED security key management

- Displaying graphics for real-time and historical power usage data and temperature

- Boot video capture and crash video capture

- Virtual console collaboration - Ability for up to 6 remote users to be logged into the remote session simultaneously

- Remote console Java client

- Mapping the ISO and image files located on the local client as virtual drives for use by the server

- Mounting the remote ISO and image files via HTTPS, SFTP, CIFS, and NFS

- Power capping

- System utilization data and graphic view

- Single sign on with Lenovo XClarity Administrator

- Update firmware from a repository

- License for XClarity Energy Manager

XCC2 Platinum also adds the following features that are new to XCC2:

- System Guard - Monitor hardware inventory for unexpected component changes, and simply log the event or prevent booting

- Enterprise Strict Security mode - Enforces CNSA 1.0 level security

- Neighbor Group - Enables administrators to manage and synchronize configurations and firmware level across multiple servers

Ordering information is listed in the following table. XCC2 Platinum is a software license upgrade - no additional hardware is required.

| Part number | Feature code | Description |
|---|---|---|
| 7S0X000DWW | S91X | Lenovo XClarity XCC2 Platinum Upgrade |
| 7S0X000KWW | SBCV | Lenovo XClarity Controller 2 (XCC2) Platinum Upgrade |

With XCC2 Platinum, for CTO orders, you can request that System Guard be enabled in the factory and the first configuration snapshot be recorded. To add this to an order, select the feature code listed in the following table. The selection is made in the Security tab of the DCSC configurator.

| Feature code | Description |
|---|---|
| BUT2 | Install System Guard |

For more information about System Guard, see https://pubs.lenovo.com/xcc2/NN1ia_c_systemguard

Remote management using the SMM

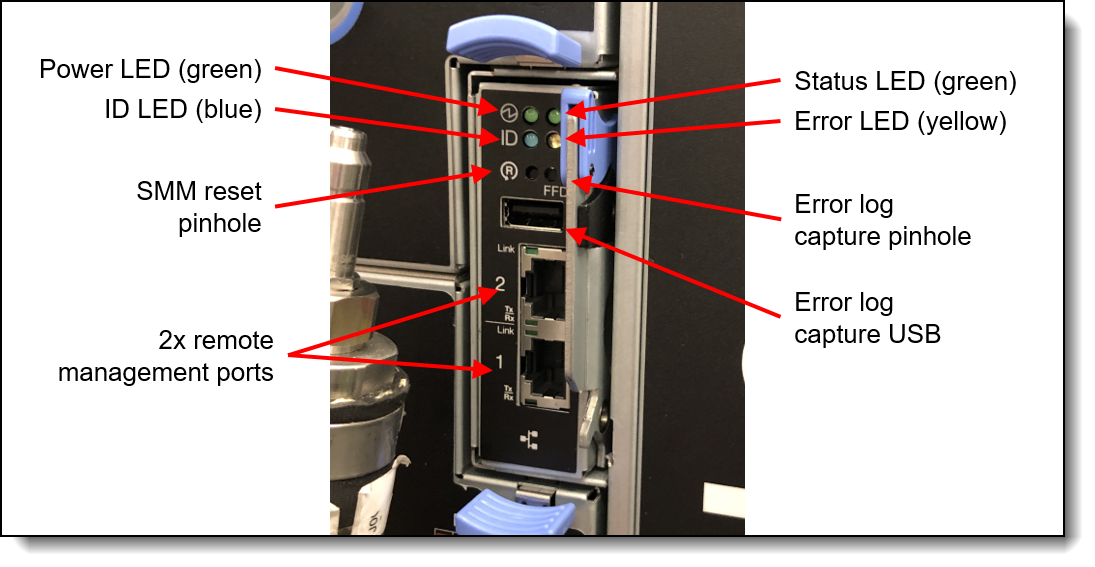

The DW612S enclosure includes a System Management Module 2 (SMM), installed in the rear of the enclosure. See Enclosure rear view for the location of the SMM. The SMM provides remote management of both the enclosure and the individual servers installed in the enclosure. The SMM can be accessed through a web browser interface and via Intelligent Platform Management Interface (IPMI) 2.0 commands.

The SMM provides the following functions:

- Remote connectivity to XCC controllers in each node in the enclosure

- Node-level reporting and control (for example, node virtual reseat/reset)

- Enclosure power management

- Enclosure thermal management

- Enclosure inventory

The following figure shows the LEDs and connectors of the SMM.

The SMM has the following ports and LEDs:

- 2x Gigabit Ethernet RJ45 ports for remote management access

- USB port and activation button for service

- SMM reset button

- System error LED (yellow)

- Identification (ID) LED (blue)

- Status LED (green)

- System power LED (green)

The USB service button and USB service port are used to gather service data in the event of an error. Pressing the service button copies First Failure Data Collection (FFDC) data to a USB key installed in the USB service port. The reset button is used to perform an SMM reset (short press) or to restore the SMM back to factory defaults (press for 4+ seconds).

The two RJ45 Ethernet ports let you daisy-chain the Ethernet management connections, which reduces the number of ports needed on your management switches and the overall cable density for systems management. With this feature, you connect the first SMM to your management network, the SMM in a second enclosure to the first SMM, and the SMM in a third enclosure to the SMM in the second enclosure.

Up to 7 enclosures can be connected in a daisy-chain configuration and all servers in those enclosures can be managed remotely via one single Ethernet connection.

Notes:

- If you are using IEEE 802.1D spanning tree protocol (STP) then at most 6 enclosures can be connected together

- Do not form a loop with the network cabling. The dual-port SMM at the end of the chain should not be connected back to the switch that is connected to the top of the SMM chain.
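The chaining limits above can be expressed as a small planning check. The sketch below is illustrative only: the function names are hypothetical, and the limits (7 enclosures per chain, 6 when IEEE 802.1D STP is in use, no loop back to the switch) are taken directly from the text.

```python
import math

MAX_CHAIN = 7      # enclosures per daisy chain
MAX_CHAIN_STP = 6  # limit when IEEE 802.1D spanning tree is in use


def max_chain_length(uses_stp):
    """Largest number of enclosures allowed in one daisy chain."""
    return MAX_CHAIN_STP if uses_stp else MAX_CHAIN


def switch_ports_needed(enclosures, uses_stp=False):
    """Management switch ports needed if every chain is filled to its limit."""
    if enclosures < 0:
        raise ValueError("enclosures must not be negative")
    return math.ceil(enclosures / max_chain_length(uses_stp))


def validate_chain(chain_length, uses_stp=False, loops_back_to_switch=False):
    """Return a list of problems found in a planned chain (empty if none)."""
    problems = []
    limit = max_chain_length(uses_stp)
    if chain_length > limit:
        problems.append(f"chain has {chain_length} enclosures, limit is {limit}")
    if loops_back_to_switch:
        problems.append("last SMM must not be cabled back to the switch")
    return problems


if __name__ == "__main__":
    print(switch_ports_needed(21))                 # 3
    print(switch_ports_needed(21, uses_stp=True))  # 4
    print(validate_chain(7, uses_stp=True))
```

For example, 21 enclosures need three switch ports without STP, compared with 21 ports if each SMM were cabled to the switch individually.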

Lenovo HPC & AI Software Stack

The Lenovo HPC & AI Software Stack combines open-source with proprietary best-of-breed Supercomputing software to provide the most consumable open-source HPC software stack embraced by all Lenovo HPC customers.

It provides a fully tested and supported, complete but customizable HPC software stack that enables administrators and users to utilize their Lenovo supercomputers optimally and in an environmentally sustainable way.

The Lenovo HPC & AI Software Stack is built on the most widely adopted and maintained HPC community software for orchestration and management. It integrates third party components especially around programming environments and performance optimization to complement and enhance the capabilities, creating the organic umbrella in software and service to add value for our customers.

The key open-source components of the software stack are as follows:

- Confluent Management

Confluent is Lenovo-developed open-source software designed to discover, provision, and manage HPC clusters and the nodes that comprise them. Confluent provides powerful tooling to deploy and update software and firmware to multiple nodes simultaneously, with simple and readable modern software syntax.

- SLURM Orchestration

Slurm is integrated as an open source, flexible, and modern choice to manage complex workloads for faster processing and optimal utilization of the large-scale and specialized high-performance and AI resource capabilities needed per workload provided by Lenovo systems. Lenovo provides support in partnership with SchedMD.

- LiCO Webportal