Abstract

The Flex System™ IB6132D 2-port FDR InfiniBand Adapter is a two-port mid-mezzanine card for the Flex System x222 Compute Node. It delivers low latency and high bandwidth for performance-driven server clustering applications in enterprise data centers, high-performance computing (HPC), and embedded environments. The adapter is designed to operate at InfiniBand FDR speeds (56 Gbps total, 14 Gbps per lane) and provides one 56 Gbps port to each of the two independent servers in the x222 Compute Node.

Note: This adapter is withdrawn from marketing.

Introduction

The Flex System™ IB6132D 2-port FDR InfiniBand Adapter is a two-port mid-mezzanine card for the Flex System x222 Compute Node. It delivers low latency and high bandwidth for performance-driven server clustering applications in enterprise data centers, high-performance computing (HPC), and embedded environments. The adapter is designed to operate at InfiniBand FDR speeds (56 Gbps total, 14 Gbps per lane) and provides one 56 Gbps port to each of the two independent servers in the x222 Compute Node.

The following figure shows the Flex System IB6132D 2-port FDR InfiniBand Adapter.

Figure 1. Flex System IB6132D 2-port FDR InfiniBand Adapter

Did you know?

Mellanox InfiniBand adapters deliver industry-leading bandwidth with ultra-low, submicrosecond latency for performance-driven server clustering applications. Combined with the IB6131 InfiniBand Switch, the adapter offloads protocol processing and data movement overhead, such as Remote Direct Memory Access (RDMA) and Send/Receive semantics, from the CPU, which leaves more processor power for the application. Advanced acceleration technology enables more than 90 million Message Passing Interface (MPI) messages per second, making it a highly scalable adapter that delivers cluster efficiency and scalability to tens of thousands of nodes.

Part number information

The following table shows the part number to order this card.

Table 1. Part number and feature code for ordering

| Description | Part number | Feature code |
|---|---|---|
| Flex System IB6132D 2-port FDR InfiniBand Adapter | 90Y3486 | A365 |

The part number includes the following items:

- One Flex System IB6132D 2-port FDR InfiniBand Adapter

- A documentation CD containing the adapter user’s guide

- The Important Notices document

Features

The Flex System IB6132D 2-port FDR InfiniBand Adapter has the following features.

Performance

Based on Mellanox ConnectX-3 technology, the IB6132D 2-port FDR InfiniBand Adapter provides a high level of throughput performance for all network environments by removing I/O bottlenecks in mainstream servers that are limiting application performance. Servers can achieve up to 56 Gbps transmit and receive bandwidth. Hardware-based InfiniBand transport and IP over InfiniBand (IPoIB) stateless offload engines handle the segmentation, reassembly, and checksum calculations that otherwise burden the host processor.

RDMA over the InfiniBand fabric further accelerates application run time while reducing CPU utilization. RDMA supports the very high-volume, transaction-intensive applications that are typical of HPC and financial market firms, as well as other industries where speed of data delivery is paramount. With the ConnectX-3-based adapter, highly compute-intensive tasks that run on hundreds or thousands of multiprocessor nodes, such as climate research, molecular modeling, and physical simulations, can share data and synchronize faster, resulting in shorter run times. High-frequency transaction applications can access trading information more quickly, so that trading servers can respond first to new market data and market inefficiencies, while the higher throughput enables higher-volume trading, maximizing liquidity and profitability.

In data mining or web crawl applications, RDMA provides the needed boost in performance to search faster by solving the network latency bottleneck that is associated with I/O cards and the corresponding transport technology in the cloud. Various other applications that benefit from RDMA with ConnectX-3 include Web 2.0 (Content Delivery Network), Business Intelligence, database transactions, and various cloud-computing applications. The low-power consumption of Mellanox ConnectX-3 provides clients with high bandwidth and low latency at the lowest cost of ownership.

I/O virtualization

Mellanox adapters that use Virtual Intelligent Queuing (Virtual-IQ) technology with SR-IOV provide dedicated adapter resources and guaranteed isolation and protection for virtual machines (VMs) within the server. I/O virtualization on InfiniBand gives data center managers better server utilization and LAN and SAN unification while reducing cost, power, and cable complexity.

Quality of service

Resource allocation per application or per VM is provided by the advanced quality of service (QoS) that is supported by ConnectX-3. Service levels for multiple traffic types can be assigned on a per flow basis, allowing system administrators to prioritize traffic by application, virtual machine, or protocol. This powerful combination of QoS and prioritization provides the ultimate fine-grained control of traffic, ensuring that applications run smoothly in today’s complex environments.

Specifications

The Flex System IB6132D 2-port FDR InfiniBand Adapter has the following specifications:

- Based on Mellanox ConnectX-3 technology.

- Two independent Mellanox ASICs, one port per ASIC.

- Two-port card, with one port routed to each of the independent servers in the x222 Compute Node.

- Each port operates at up to 56 Gbps.

- InfiniBand Architecture Specification V1.2.1 compliant.

- Supported InfiniBand speeds (auto-negotiated):

  - 1X/2X/4X Single Data Rate (SDR) (2.5 Gbps per lane)

  - Double Data Rate (DDR) (5 Gbps per lane)

  - Quad Data Rate (QDR) (10 Gbps per lane)

  - FDR10 (40 Gbps, 10 Gbps per lane)

  - Fourteen Data Rate (FDR) (56 Gbps, 14 Gbps per lane)

- PCI Express 3.0 x8 host interface that operates at up to 8 gigatransfers per second (GT/s) per lane.

- CPU offload of transport operations.

- CORE-Direct® application offload.

- GPUDirect application offload.

- End-to-end QoS and congestion control.

- Hardware-based I/O virtualization.

- TCP/UDP/IP stateless offload.

- Ethernet encapsulation (EoIB).

- RoHS-6 compliant.

Note: To operate at InfiniBand FDR speeds, the Flex System IB6131 InfiniBand Switch requires the FDR Upgrade license, 90Y3462.
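The relationship between the per-lane rates and the port speeds in the list above can be checked with a little arithmetic. The following is a minimal sketch, not vendor tooling: the per-lane rates and the 8 GT/s PCIe figure come from this guide, while the line-encoding ratios (8b/10b for SDR, DDR, and QDR; 64b/66b for FDR10 and FDR; 128b/130b for PCI Express 3.0) and the function names are assumptions added for illustration.

```python
# Per-lane signaling rates in Gbps, as listed in the specifications above.
LANE_RATE_GBPS = {"SDR": 2.5, "DDR": 5.0, "QDR": 10.0, "FDR10": 10.0, "FDR": 14.0}

# Line encoding as (payload bits, transmitted bits).
# Assumption: these ratios are not stated in this guide.
ENCODING = {
    "SDR": (8, 10),
    "DDR": (8, 10),
    "QDR": (8, 10),
    "FDR10": (64, 66),
    "FDR": (64, 66),
}


def link_rate_gbps(speed: str, lanes: int = 4) -> float:
    """Raw signaling rate of a link: per-lane rate times the lane count."""
    return LANE_RATE_GBPS[speed] * lanes


def data_rate_gbps(speed: str, lanes: int = 4) -> float:
    """Signaling rate with the line-encoding overhead removed."""
    payload_bits, total_bits = ENCODING[speed]
    return link_rate_gbps(speed, lanes) * payload_bits / total_bits


def pcie3_data_rate_gbps(lanes: int = 8) -> float:
    """PCI Express 3.0: 8 GT/s per lane, assuming 128b/130b encoding."""
    return 8.0 * lanes * 128 / 130


if __name__ == "__main__":
    for speed in LANE_RATE_GBPS:
        print(f"{speed:6s} 4X: {link_rate_gbps(speed):5.1f} Gbps signaling, "
              f"{data_rate_gbps(speed):6.2f} Gbps after encoding")
    print(f"PCIe 3.0 x8: {pcie3_data_rate_gbps():.2f} Gbps after encoding")
```

Under these assumptions, a 4X FDR link carries 4 × 14 = 56 Gbps of signaling, and a PCI Express 3.0 x8 host interface has enough raw capacity to feed one such port. Protocol overheads above the line encoding are ignored here.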

Supported servers

The following table lists the Flex System compute nodes that support the IB6132D 2-port FDR InfiniBand Adapter.

Table 2. Supported servers

| Description | Part number | | | | | | | | |
|---|---|---|---|---|---|---|---|---|---|
| Flex System IB6132D 2-port FDR InfiniBand Adapter | 90Y3486 | N | Y | N | N | N | N | N | N |

For the latest information about the expansion cards that are supported by each compute node type, see ServerProven® at the following web address:

http://www.lenovo.com/us/en/serverproven/flexsystem.shtml

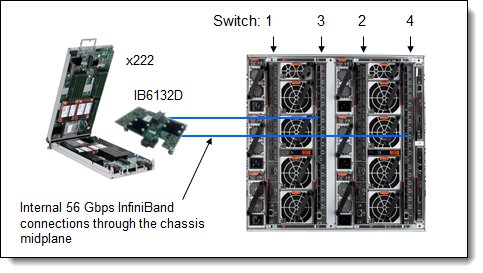

Mid-mezzanine cards, such as the IB6132D, are installed in the x222, as shown in the following figure. Only one adapter can be installed, but the adapter can connect to both servers in the x222 Compute Node.

Figure 2. The adapter installed in the Flex System x222 Compute Node

Supported I/O modules

The IB6132D 2-port FDR InfiniBand Adapter supports the I/O module that is listed in the following table. To operate the IB6131 InfiniBand Switch at FDR speeds (56 Gbps), you must also install the FDR Upgrade license, 90Y3462.

Table 3. I/O modules that are supported by the IB6132D 2-port FDR InfiniBand Adapter

| Description | Part number |
|---|---|
| Flex System IB6131 InfiniBand Switch | 90Y3450 |
| Flex System IB6131 InfiniBand Switch (FDR Upgrade)* | 90Y3462 |

* This license allows the switch to support FDR speeds.

A switch module must be installed in both bays 3 and 4 in the chassis. As shown in the following table, the upper compute node in the x222 routes through the card to the switch in bay 4 and the lower compute node in the x222 routes through the card to the switch in bay 3. The adapter has two ports, and each port is driven by its own ASIC.

Table 4. Adapter to I/O bay correspondence

| Compute node | IB6132D 2-port FDR InfiniBand | Corresponding I/O module bay in the chassis |
|---|---|---|
| Upper compute node | Upper Port 1 | Module bay 4 |
| Lower compute node | Lower Port 1 | Module bay 3 |
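The port-to-bay routing in Table 4 is small enough to capture as a lookup table, which can be handy in inventory or validation scripts. The following is an illustrative sketch only: the `Route` type, the `ROUTES` dictionary, and both helper functions are hypothetical names, and the only data used is what Table 4 states.

```python
from typing import Iterable, List, NamedTuple


class Route(NamedTuple):
    adapter_port: str  # port name as shown in Table 4
    io_bay: int        # chassis I/O module bay that the port is routed to


# Routing taken from Table 4; the dictionary keys are hypothetical labels.
ROUTES = {
    "upper": Route("Upper Port 1", 4),
    "lower": Route("Lower Port 1", 3),
}


def io_bay_for(node: str) -> int:
    """Return the I/O module bay that serves the given x222 compute node."""
    return ROUTES[node.lower()].io_bay


def missing_switch_bays(installed_bays: Iterable[int]) -> List[int]:
    """List the bays that still need a switch so both servers have a link."""
    required = {route.io_bay for route in ROUTES.values()}
    return sorted(required - set(installed_bays))


if __name__ == "__main__":
    print(io_bay_for("upper"))
    print(missing_switch_bays([3]))
```

A check such as `missing_switch_bays` reflects the requirement that a switch module be installed in both bays 3 and 4: with a switch in bay 3 only, the upper compute node would have no InfiniBand path.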

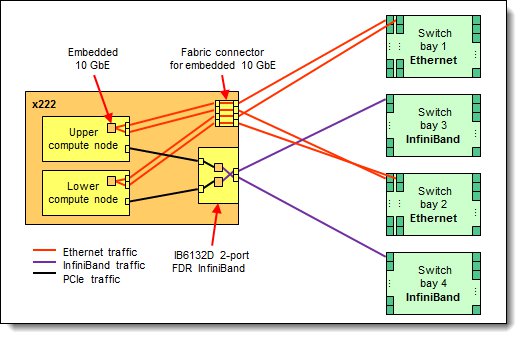

The IB6132D 2-port FDR InfiniBand adapter is installed in the single I/O expansion slot in the x222. The following figure shows how the IB6132D 2-port FDR InfiniBand adapter is connected to InfiniBand switches that are installed in the chassis. The figure also shows the four ports of the two Embedded 10 GbE Virtual Fabric Adapters that are routed to the Ethernet switches in bays 1 and 2.

Figure 3. Logical layout of the x222 interconnects - Ethernet and InfiniBand

Supported operating systems

The IB6132D 2-port FDR InfiniBand Adapter supports the following 64-bit operating systems:

- Microsoft Windows Server 2008 R2

- Microsoft Windows Server 2012 R2

- Red Hat Enterprise Linux 5 Server x64 Edition

- Red Hat Enterprise Linux 6 Server x64 Edition

- SUSE LINUX Enterprise Server 10 for AMD64/EM64T

- SUSE LINUX Enterprise Server 11 for AMD64/EM64T

- VMware ESX 4.1

- VMware vSphere 5.0 (ESXi)
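On the Linux distributions in this list, one way to confirm that a port has negotiated FDR rather than a lower speed is to read the port's `rate` attribute from the kernel's InfiniBand sysfs tree. The following is a minimal sketch under stated assumptions: the device name `mlx4_0` and port number 1 are guesses that can differ per system, the helper names are hypothetical, and a rate string without a generation suffix is treated as SDR.

```python
import re
from pathlib import Path
from typing import Tuple

# Matches strings such as "56 Gb/sec (4X FDR)" or "2.5 Gb/sec (1X)".
RATE_PATTERN = re.compile(r"^\s*(\d+(?:\.\d+)?)\s+Gb/sec\s+\((\d+)X(?:\s+(\w+))?\)")


def parse_rate(text: str) -> Tuple[float, int, str]:
    """Split a sysfs rate string into (Gbps, lane count, generation)."""
    match = RATE_PATTERN.match(text)
    if match is None:
        raise ValueError(f"unrecognized rate string: {text!r}")
    gbps = float(match.group(1))
    lanes = int(match.group(2))
    # Assumption: a missing generation suffix means SDR.
    generation = match.group(3) or "SDR"
    return gbps, lanes, generation


def read_port_rate(device: str = "mlx4_0", port: int = 1) -> Tuple[float, int, str]:
    """Read and parse the rate of one port. Device and port are assumptions."""
    path = Path("/sys/class/infiniband") / device / "ports" / str(port) / "rate"
    return parse_rate(path.read_text())


if __name__ == "__main__":
    gbps, lanes, generation = read_port_rate()
    print(f"{lanes}X {generation} link at {gbps} Gbps")
```

If the switch lacks the FDR Upgrade license, the link would be expected to report a lower generation than FDR, which makes this a quick sanity check after installation.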

Regulatory compliance

The adapter conforms to the following standards:

- United States FCC 47 CFR Part 15, Subpart B, ANSI C63.4 (2003), Class A

- United States UL 60950-1, Second Edition

- IEC/EN 60950-1, Second Edition

- FCC - Verified to comply with Part 15 of the FCC Rules, Class A

- Canada ICES-003, issue 4, Class A

- UL/IEC 60950-1

- CSA C22.2 No. 60950-1-03

- Japan VCCI, Class A

- Australia/New Zealand AS/NZS CISPR 22:2006, Class A

- IEC 60950-1 (CB Certificate and CB Test Report)

- Taiwan BSMI CNS13438, Class A

- Korea KN22, Class A; KN24

- Russia/GOST ME01, IEC-60950-1, GOST R 51318.22-99, GOST R 51318.24-99, GOST R 51317.3.2-2006, and GOST R 51317.3.3-99

- CE Mark (EN55022 Class A, EN60950-1, EN55024, EN61000-3-2, and EN61000-3-3)

- CISPR 22, Class A

Physical specifications

The dimensions and weight of the adapter are as follows:

- Width: 158 mm (6.2 in.)

- Depth: 108 mm (4.2 in.)

- Weight: 230 g (0.5 lb)

Shipping dimensions and weight (approximate):

- Height: 97 mm (3.8 in.)

- Width: 165 mm (6.5 in.)

- Depth: 215 mm (8.5 in.)

- Weight: 430 g (0.95 lb)

Popular configurations

The IB6132D 2-port FDR InfiniBand Adapter is used with the IB6131 InfiniBand Switch. The following figure shows one IB6132D adapter that is installed in an x222 Compute Node, which in turn is installed in the chassis. Two IB6131 InfiniBand Switches are installed in I/O bays 3 and 4.

Figure 4. Example configuration

The following table lists the parts that are used in the configuration. This configuration includes the FDR upgrade license for the IB6131 switch, as well as the FDR cables.

Table 5. Components that are used when connecting the IB6132D 2-port FDR InfiniBand Adapter to the IB6131 InfiniBand Switches

| Part number/machine type | Description | Quantity |
|---|---|---|
| 7916 | Flex System x222 Compute Node | 1 - 14 |
| 90Y3486 | IB6132D 2-port FDR InfiniBand Adapter | 1 per x222 Compute Node |
| 8721-A1x | Flex System Enterprise Chassis | 1 |
| 90Y3450 | Flex System IB6131 InfiniBand Switch (in bays 3 and 4) | 2 |
| 90Y3462 | Flex System IB6131 InfiniBand Switch (FDR Upgrade) | 2 |
| 90Y3470 | 3m FDR InfiniBand Cable | Up to 36 (18 per switch) |
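The quantity rules in Table 5 can be expressed as a small calculation, for example when sizing an order for a partially populated chassis. This is a rough sketch: the function names and the dictionary layout are hypothetical, the limits (1 to 14 compute nodes, up to 36 cables) are taken from the table, and the server count relies on each x222 containing two independent servers as described earlier in this guide.

```python
from typing import Dict

MAX_X222_PER_CHASSIS = 14   # Table 5: 1 - 14 compute nodes
MAX_FDR_CABLES = 36         # Table 5: up to 36 (18 per switch)


def bill_of_materials(x222_nodes: int, cables: int = 0) -> Dict[str, int]:
    """Return part number -> quantity for one chassis, following Table 5."""
    if not 1 <= x222_nodes <= MAX_X222_PER_CHASSIS:
        raise ValueError("x222_nodes must be between 1 and 14")
    if not 0 <= cables <= MAX_FDR_CABLES:
        raise ValueError("cables must be between 0 and 36")
    return {
        "7916": x222_nodes,      # Flex System x222 Compute Node
        "90Y3486": x222_nodes,   # one IB6132D adapter per x222
        "8721-A1x": 1,           # Flex System Enterprise Chassis
        "90Y3450": 2,            # IB6131 switches in bays 3 and 4
        "90Y3462": 2,            # one FDR Upgrade license per switch
        "90Y3470": cables,       # 3m FDR InfiniBand cables
    }


def servers_in_chassis(x222_nodes: int) -> int:
    """Each x222 holds two independent servers, each with one FDR port."""
    return 2 * x222_nodes


if __name__ == "__main__":
    for part, quantity in bill_of_materials(14, cables=36).items():
        print(f"{part:10s} x {quantity}")
    print("servers:", servers_in_chassis(14))
```

Note that the switch and license quantities stay at two regardless of the node count, because each x222 needs a path to both bay 3 and bay 4.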

Trademarks

Lenovo and the Lenovo logo are trademarks or registered trademarks of Lenovo in the United States, other countries, or both. A current list of Lenovo trademarks is available on the Web at https://www.lenovo.com/us/en/legal/copytrade/.

The following terms are trademarks of Lenovo in the United States, other countries, or both:

Lenovo®

ServerProven®

The following terms are trademarks of other companies:

Linux® is the trademark of Linus Torvalds in the U.S. and other countries.

Microsoft®, Windows Server®, and Windows® are trademarks of Microsoft Corporation in the United States, other countries, or both.

ibm.com® is a trademark of IBM in the United States, other countries, or both.

Other company, product, or service names may be trademarks or service marks of others.